Here's a simple way to think about it: Confirmation bias is our brain's tendency to cherry-pick information that proves we were right all along. It’s like wearing a pair of custom-made glasses that only let you see evidence supporting what you already believe. Everything else just gets filtered out.

First off, having this bias doesn't mean you're a flawed person. It's a subconscious mental shortcut—a piece of our brain's original programming. Our minds are built for efficiency, and in a world overflowing with information, this bias helps us sort through the noise by latching onto what feels familiar.

This little shortcut runs in the background of your mind, quietly steering your decisions, from the big ones to the tiny, everyday ones. It influences which news articles you click, what you think about the latest diet craze, and even how you read the mood in a conversation with a friend. After all, being right feels good, so our brain naturally seeks out that feeling of validation.

This process is sneaky and usually happens in one of three ways. You’ve probably done all of them without a second thought.

Biased Search: You hunt for evidence that backs up your gut feeling. If you think a new gadget is great, you’ll Google "why [gadget] is the best," not "problems with [gadget]."

Biased Interpretation: You take neutral or fuzzy information and bend it to fit what you already believe. It's like seeing the facts through your own personal funhouse mirror.

Biased Recall: You have a crystal-clear memory for all the times your beliefs were proven right, but the moments you were wrong? Those memories get a bit hazy.

Confirmation bias is a widespread cognitive bias that leads individuals to search for, interpret, and recall information in ways that affirm their preexisting beliefs or values.

This tendency gets even stronger when emotions are involved. Think about politics. Confirmation bias is a huge reason why people get stuck in their own echo chambers, sharing articles that confirm their team is right and the other is wrong. It's a major driver of polarization.

To really get what confirmation bias is, it helps to see it broken down. It’s not just one thing; it's a trio of mental habits that work together to shield our beliefs from harm.

Here’s a quick look at the three main ways this bias shows up in your day-to-day life.

| Type of Influence | How It Works | Simple Example |
|---|---|---|
| Biased Search | Actively seeking out evidence that supports your beliefs. | Only searching for "benefits of coffee" and ignoring articles about its potential downsides. |
| Biased Interpretation | Interpreting neutral or ambiguous facts to confirm your ideas. | Seeing a friend's short text message and assuming they're angry because you already suspected it. |
| Biased Memory | Selectively remembering information that reinforces your perspective. | Recalling all the times your favorite sports team won but forgetting their losses. |

At the end of the day, just knowing this bias exists is the most important step. Once you see it, you can start to spot its subtle pull on your own thinking, which opens the door to thinking more clearly and making better-informed choices.

To really get what confirmation bias is, you first have to understand your brain’s primary mission: to be as efficient as possible. While your brain is a powerhouse, it’s also fundamentally lazy. It's designed to take mental shortcuts, known as heuristics, rather than engaging in slow, deliberate analysis that burns a ton of energy. Confirmation bias is one of its go-to moves.

Think of your brain as the CEO of a company with a very tight mental energy budget. When new information comes across its desk, it can either spend a lot of resources analyzing it from every possible angle or take the cheap route by seeing if it lines up with what it already knows. Nine times out of ten, it’s going to pick the low-cost option.

But it's not just about saving energy; it's also about feeling good. Having an existing belief confirmed is rewarding, a feeling often described in terms of dopamine, the brain's "feel-good" chemical. Being "right" makes us feel smart, confident, and secure in our understanding of the world. That pleasant feeling makes us want to seek it out again and again, which only digs the bias in deeper over time.

Confirmation bias isn't just one thing; it’s more like a three-part system that runs silently in the background. It quietly shapes what information we look for, how we understand it, and even what we remember. Getting a handle on these three pieces is the first step to catching yourself in the act.

Biased Search: This is the most obvious part of the process. It's when you actively hunt for proof that supports your gut feeling while ignoring anything that might poke holes in it. If you're convinced a new diet is the next big thing, you'll google "Mediterranean diet success stories," not "problems with the Mediterranean diet."

Biased Interpretation: This is where things get tricky. Even when information is neutral or a bit fuzzy, your brain will bend it to fit what you already believe. Think about a political debate where a candidate gives a vague answer. To their supporters, it’s a smart, nuanced response. To their opponents, it’s a classic case of dodging the question. The "facts" were identical, but the interpretations couldn't be more different.

Biased Recall: Our memory isn't like a video camera that records everything perfectly. It’s more like a highlight reel curated by a very biased director. That director is confirmation bias, and it makes sure we vividly remember the one time our horoscope was eerily accurate but conveniently forget the 99% of times it was total nonsense.

At its heart, confirmation bias is your brain's personal hype man, constantly whispering that your version of reality is the right one. It filters, tweaks, and edits the world to fit the story you're already telling yourself.

These three pillars don't work in isolation—they create a powerful feedback loop. Your biased search feeds you agreeable evidence, your biased interpretation makes that evidence feel solid and compelling, and your biased memory ensures you only recall the "wins" while forgetting all the "misses."

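Let's walk through a quick example. A manager hires someone they believe is going to be a rockstar employee. From then on, the manager tends to notice the new hire's successes, read ambiguous results in their favor, and remember the wins more clearly than the misses.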

This self-reinforcing cycle makes it incredibly hard to change our minds, even when the facts are staring us in the face. Our brain's shortcuts have built a comfortable little reality for us, and challenging it means going up against our own internal reward system.

Confirmation bias isn't some abstract idea from a psychology textbook. It's a powerful force that quietly nudges countless decisions you make every single day. Once you learn to spot it, you’ll see it everywhere—from your social media feed to your grocery cart.

Think of it as an invisible hand guiding you toward information that just feels right because it matches what you already believe. It works by making you favor any evidence that supports your existing opinions, whether they’re about politics, health, or even your favorite sports team.

Your brain essentially acts like a superfan. It eagerly collects every article, stat, and comment that proves your team is the best, while conveniently ignoring—or finding excuses for—anything that suggests otherwise.

One of the most powerful examples of confirmation bias today is probably sitting in your pocket right now. Social media platforms are engineered to show you content that grabs your attention, and nothing is more engaging than information that confirms our own worldview.

If you lean politically to the left, your feed likely serves up a steady diet of posts that praise the left and critique the right. The opposite is true if you lean right. Over time, the algorithms learn what you like and build a personalized reality bubble around you, constantly feeding you "proof" that your side is the correct one.

This digital echo chamber strengthens your beliefs until they become rigid. It makes it incredibly difficult to understand opposing views because you rarely encounter them in a fair or nuanced way. This is the perfect breeding ground for confirmation bias, deepening social divides and making genuine conversation feel almost impossible.

Confirmation bias also shows up in a big way when we make decisions about our health. Let’s say you’ve decided to go all-in on the keto diet, convinced it's the ultimate path to weight loss and boundless energy.

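Here's how the bias kicks in: you seek out keto success stories, read every good day as proof the diet is working, and remember the wins while the setbacks fade.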

This cycle reinforces your initial decision, making you almost immune to information that challenges your new lifestyle. You aren't just following a diet; you're actively filtering reality to protect your belief in it.

This mental shortcut isn't just about diet fads. Psychological experiments have repeatedly shown that people selectively remember information that confirms their self-image and dismiss contradictory feedback as simply unreliable.

This tendency can scale up from personal choices to community-wide beliefs. It's not a new phenomenon; historical events like the Salem witch trials show how a group's shared biases can spiral into a collective delusion. A more bizarre modern case was the 1950s Seattle windshield pitting epidemic, where residents became convinced a mysterious force was damaging their cars, a belief fueled largely by shared confirmation bias. You can explore further how this bias shapes both personal and collective beliefs on Wikipedia.

This bias even seeps into our most personal relationships, coloring how we see the words and actions of the people we care about. If you already have a nagging feeling that a friend is upset with you, confirmation bias will go to work to prove you right.

For instance, you send them a text, and they reply with a short, one-word answer. If you were feeling good about the friendship, you might just think, "Oh, they must be busy." But because you're already suspicious, you see that same text as cold, passive-aggressive proof that they’re definitely mad.

Now you're on high alert, scanning every interaction for more clues. A delayed reply, a neutral expression, a sigh—everything becomes evidence supporting your initial fear. This can easily become a self-fulfilling prophecy, where your biased assumptions make you act defensively, which in turn creates the very tension you were worried about in the first place.

Confirmation bias has always been part of human nature, but modern technology has put it on steroids. That natural pull we feel toward information that supports our beliefs is now supercharged by powerful algorithms designed for one thing: keeping our attention. These systems get scarily good at learning what we like, creating a personalized digital world where our views aren't just validated—they're constantly echoed back to us.

Every single click, like, and share is a signal. Search engines and social media platforms gobble up this data to curate what you see next. The result? A perfectly tailored information stream that aligns with your worldview, making it feel like your perspective isn't just one of many, but the main one.

This is what’s known as a "filter bubble" or an "echo chamber." It's a cozy digital space, but it's one where you’re rarely challenged by opposing ideas. Over time, living in this digital cocoon can make your beliefs more rigid, as you're constantly fed a diet of information telling you that you're right.

The algorithms running our online world aren't evil. Their primary job is simply to keep you engaged and on the platform longer. It just so happens that the best way to do that is to serve you content that gets an emotional reaction, and nothing feels better than the satisfaction of being proven right.

Let's say you're looking up a controversial health topic. Your search engine notices your phrasing and the first couple of links you choose. It quickly figures out where you stand and begins to shuffle the results, bumping up sources that match your viewpoint and pushing dissenting ones so far down the page you'll probably never see them.

This process is incredibly effective at keeping you clicking, but it comes with a massive problem. A 2021 study found that more than 50% of online health content hasn't been verified by experts. When you combine that with personalized algorithms feeding you exactly what you want to hear, it's easy to fall into a misinformation trap, all while feeling like you've done extensive research.
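To make that feedback loop concrete, here is a toy sketch in Python. It is not how any real search engine or social platform works; the `rerank` function, the `stance` labels, and the simulated user who always clicks a supportive result are all assumptions made up for illustration. It only shows how a ranking that rewards past clicks can push dissenting results down the page.

```python
from collections import defaultdict


def rerank(items, click_history):
    """Order items so the stances the user clicked most often come first.

    Hypothetical scoring rule: an item's score is simply the number of
    past clicks on items that share its stance.
    """
    clicks_per_stance = defaultdict(int)
    for stance in click_history:
        clicks_per_stance[stance] += 1
    # sorted() is stable, so items with equal scores keep their original order.
    return sorted(
        items,
        key=lambda item: clicks_per_stance[item["stance"]],
        reverse=True,
    )


# Made-up search results for a controversial health topic.
results = [
    {"title": "Risks of the diet", "stance": "skeptical"},
    {"title": "Why the diet works", "stance": "supportive"},
    {"title": "Mixed evidence on the diet", "stance": "neutral"},
    {"title": "Diet success stories", "stance": "supportive"},
]

history = []
for _ in range(3):
    ranked = rerank(results, history)
    # Simulated user: always clicks the first result that agrees with them.
    clicked = next(item for item in ranked if item["stance"] == "supportive")
    history.append(clicked["stance"])

final_ranking = rerank(results, history)
for position, item in enumerate(final_ranking, start=1):
    print(position, item["title"])
```

After three clicks, both supportive articles sit at the top, and the skeptical article that started in first place has dropped to third. Nothing in the code "wants" to mislead anyone; the narrowing falls out of rewarding whatever was clicked before.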

The real danger of the digital echo chamber is that it doesn't just give us what we want to hear—it makes us forget that other viewpoints even exist. It quietly erodes our ability to engage in civil discourse and find common ground.

This tech-fueled confirmation bias has serious consequences that ripple out far beyond our individual screens. By sorting us into like-minded digital tribes, it pours gasoline on the fire of social and political polarization. When the only voices we hear are ones that agree with us, it’s much easier to see people with different opinions as not just wrong, but foolish or even malicious.

This environment is also the perfect breeding ground for misinformation. False narratives spread like wildfire inside these echo chambers because they are tailor-made to confirm the group’s biases. With no credible counterarguments to slow them down, these stories take hold and harden into what feels like fact.

This cycle creates a ripple effect of societal problems, from deeper polarization to faster-spreading misinformation and the erosion of civil discourse.

To truly grasp what confirmation bias is today, we have to recognize the powerful role technology plays. Our own devices and the platforms we use have become unwitting partners in reinforcing our oldest mental shortcuts. This makes the conscious, deliberate act of seeking out different perspectives more critical than ever before.

Knowing that confirmation bias is pulling the strings is the first step. But the real change happens when you start actively pushing back. This isn't something you can just switch off; our brains are hardwired this way. Instead, you need a conscious toolkit of strategies to keep that bias in check.

Let’s be honest: this means getting comfortable with being uncomfortable. It's about deliberately looking for things that contradict what you believe, questioning your gut instincts, and accepting that even your strongest opinions might not be the whole story. The goal isn't to abandon your beliefs, but to make sure they’re built on solid rock, not sand.

Think of these strategies like exercises for your brain. The more you do them, the stronger your critical thinking gets, and the clearer and more objective your decisions will become.

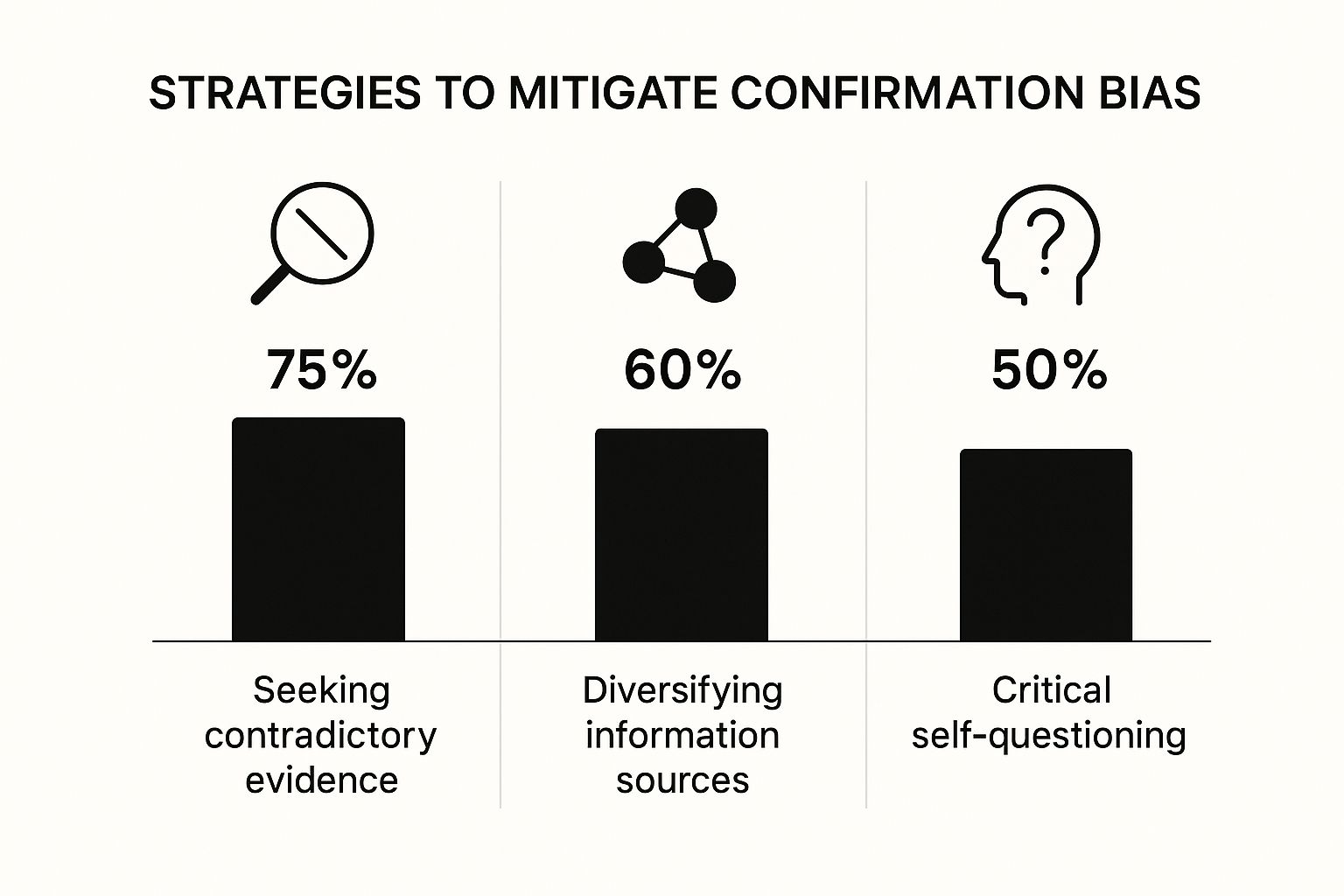

The image above breaks down some of the most common strategies people use to get a handle on their biases and see which ones they find most helpful.

It’s pretty clear from the data that one strategy stands out above the rest: actively looking for evidence that proves you wrong. This tells us that just passively seeing different opinions isn’t nearly as powerful as getting in there and actively hunting for a challenge.

One of the best ways to escape your own echo chamber is to become your own toughest critic. Before you settle on an opinion or make a big decision, force yourself to build the strongest possible argument for the opposite side.

This is more than just a quick thought experiment. You have to genuinely try to think like someone who disagrees with you. What points would they make? What data would they use? Where are the weak spots in your own argument?

The point isn't to talk yourself out of your position. It's to stress-test it. If your belief can hold up against a serious, honest challenge, you'll know it's truly solid.

For instance, say you're dead-set on your company buying a new, expensive piece of software. Before you make the pitch, take 30 minutes and list every reason why it's a terrible idea. Consider the hidden implementation costs, the pushback you might get from the team, and whether a cheaper tool could handle 80% of the work. This simple exercise forces you to see beyond your own initial excitement.

Confirmation bias loves a monotonous environment. To starve it, you have to deliberately mix up the information you consume. This means making a real effort to listen to and read things from sources that don't just tell you what you already believe.

It's easier than you think, and it starts with regularly picking up sources you'd normally skip.

Doing this doesn't just show you different facts; it shows you entirely different ways of looking at the world. You start to grasp the logic behind opposing views, which is the perfect antidote to the "us vs. them" mindset that confirmation bias feeds on.

Maybe the most important strategy of all is to cultivate intellectual humility—the simple, powerful idea that you might be wrong. It’s the polar opposite of dogmatism. It’s knowing that your knowledge has limits and that your beliefs should change when you encounter better evidence.

People who practice intellectual humility don't see changing their minds as a weakness. They see it as a sign of strength, a genuine commitment to getting closer to the truth.

This shift in mindset makes every other strategy ten times easier. Once you accept that you could be mistaken, you naturally become more curious about what you might be missing. You start asking better questions, like, "What if I'm wrong about this?" or "What's the strongest argument against my position?" This humble starting point opens the door to smarter, less biased thinking in everything you do.

Recognizing our biased thought patterns is one thing, but actively correcting them in the moment is where the magic happens. The table below outlines some common mental shortcuts we take and the specific, corrective actions you can implement to build better thinking habits.

| Biased Habit | Corrective Strategy | Why It Works |
|---|---|---|
| Only seeking agreeable sources | Deliberately read or listen to opposing viewpoints before making a final decision. | It forces exposure to disconfirming evidence, breaking the echo-chamber effect and providing a more balanced perspective. |
| Interpreting ambiguity in your favor | Ask yourself: "How would someone who disagrees with me interpret this same information?" | This creates psychological distance, allowing you to see the data from a more neutral standpoint instead of through your biased lens. |
| Focusing on wins, ignoring losses | Keep a "decision journal" to track not just the outcome, but your reasoning at the time. Review it regularly. | It provides an objective record of your thought process and outcomes, making it harder to misremember your failures as near-successes. |
| Surrounding yourself with "yes-men" | Appoint someone in a meeting to officially play the "devil's advocate" and challenge the group's consensus. | It legitimizes dissent and makes it psychologically safer for others (and yourself) to voice concerns without fear of social penalty. |

By turning these strategies into regular habits, you're not just fighting a single bias; you're building a more robust and reliable way of thinking. It's about moving from automatic, reflexive judgments to more deliberate and well-rounded conclusions.
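If you like keeping notes on a computer, the "decision journal" from the table can be as simple as the sketch below. The `JournalEntry` fields and the `hit_rate` helper are hypothetical names chosen for this example, and the entries are invented; a paper notebook with the same columns works just as well.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class JournalEntry:
    decided_on: date
    decision: str
    reasoning: str                    # written down at the time, not in hindsight
    expected_outcome: str
    was_right: Optional[bool] = None  # filled in later, during review


def hit_rate(entries):
    """Share of reviewed decisions that turned out right, or None if none are reviewed."""
    reviewed = [entry for entry in entries if entry.was_right is not None]
    if not reviewed:
        return None
    return sum(entry.was_right for entry in reviewed) / len(reviewed)


# Invented example entries.
journal = [
    JournalEntry(date(2024, 1, 8), "Buy the new software", "Demo looked great", "Saves time", was_right=False),
    JournalEntry(date(2024, 2, 3), "Hire the candidate", "Strong references", "Ramps up fast", was_right=True),
    JournalEntry(date(2024, 3, 15), "Switch suppliers", "Lower quoted price", "Cuts costs", was_right=False),
    JournalEntry(date(2024, 4, 2), "Start the new diet", "Friend recommended it", "More energy"),
]

print(f"Reviewed decisions that went as expected: {hit_rate(journal):.0%}")
```

The point of the written record is the contrast: memory may insist most of those calls went fine, while the journal shows one in three. The unreviewed entry is left out of the rate until its outcome is known.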

As you dig into what confirmation bias is, a few questions tend to pop up again and again. Getting clear, human-friendly answers to these can really solidify your understanding and make it easier to see this mental quirk in your daily life.

Think of this section as a quick-reference guide. We’ll untangle some common confusions and use simple analogies to help you feel more confident about what you've learned. By the end, you'll have a much sharper picture of this fundamental part of how we think.

It’s easy to paint confirmation bias as the bad guy of rational thinking, but the reality is a little more nuanced. In many everyday scenarios, it’s not just harmless—it's actually a useful mental shortcut that helps us get through the day without being paralyzed by choices.

For example, when you’re doing your weekly grocery shopping, you probably reach for brands you already trust. You don’t stop to conduct a fresh, deep-dive analysis every time you need milk or soap. That’s confirmation bias at work, letting you lean on your established belief ("this brand is good") to make a quick, low-stakes decision. It saves you a ton of time and brainpower.

The danger isn't the shortcut itself, but where you let it take you. The goal is to recognize when confirmation bias is a helpful time-saver versus a harmful blind spot that could lead to poor high-stakes decisions.

The trouble starts when we apply that same automatic process to big, important decisions about our health, career, or finances. Ignoring evidence that challenges your beliefs about a medical diagnosis or an investment can have devastating results. The key is to build the self-awareness to know when to trust the shortcut and when to slow down and engage in more deliberate, critical thinking.

It's easy to get lost in the jargon of cognitive biases, but a simple way to tell them apart is to think of confirmation bias as the "master bias." It's the underlying engine that powers many other, more specific biases, like stereotyping or the halo effect.

Let's use an analogy. Imagine confirmation bias is your brain's personal search engine. Its main job is to find and highlight results that perfectly match whatever you've already typed in—your existing belief.

So, while a stereotype is a specific generalization, confirmation bias is the broader, more fundamental force that cements that stereotype (and any other belief) in place. It’s the invisible hand that gathers the "proof" to protect our most cherished ideas from being challenged.

Can you get rid of confirmation bias entirely? In a word, no. Trying to eliminate it would be like trying to tell your brain to stop looking for patterns. It's a core, hardwired feature of how our minds operate efficiently, not a software bug you can just patch or delete.

The goal isn't elimination—that’s a recipe for frustration. The goal is awareness and management. The best strategy is to accept that this bias lives in all of us (yes, even you!) and then build conscious habits to counteract its worst effects.

Think of it like learning to be a defensive driver. You can't remove every reckless driver or road hazard out there. But you can learn skills that dramatically lower your risk of an accident: checking your blind spots, keeping a safe distance, and staying alert.

You can apply the same "mental defensive driving" to your own thinking: argue against your own position, vary your sources, and stay open to being wrong.

It’s an ongoing practice, not a one-and-done fix. But by consistently using these strategies, you become a much safer, more thoughtful navigator of information, capable of making better decisions.
