When you first land on a page, before you even read the first sentence, you can learn a lot. Think of it as a quick "vibe check" for credibility. A few seconds is all it takes to spot the tell-tale signs of a trustworthy source—or the red flags of a questionable one.

I do this instinctively now, and it saves me a ton of time. This isn't about deep analysis; it's about building a mental filter that immediately flags content that probably isn't worth your time. With a little practice, you'll get a gut feeling for what's solid and what's not, just from a quick glance.

This first impression is surprisingly powerful. You're just looking for a few simple things that separate carefully crafted information from opinions dressed up as facts.

When I scan a new source, my eyes immediately jump to three specific areas. They're easy to find and tell you a lot about the effort and integrity behind the content.

Authorship and Accountability: Is there a person behind the words? I always look for an author’s name and, ideally, their credentials or a short bio. An article written by "Admin" or with no author at all is a major red flag. Accountability matters.

Professional Design and Usability: This might sound superficial, but it’s a good clue. A site that's cluttered with pop-up ads, riddled with typos, and looks like it was designed in 1998 probably doesn't have a serious editorial process. Reputable publishers invest in a clean, professional presentation.

Cited Evidence: Do they show their work? I look for links within the text pointing to original studies, reports, or other credible sources. If an article makes big claims with zero links or references to back them up, I assume it's just opinion.

Here’s a real-world example: Pull up a research article on a university website and then open a random opinion blog. The university page will have named authors, their affiliations, and a long list of citations. The blog? Maybe a first name, no credentials, and zero evidence. That contrast says everything.

Alright, so you've given the source a quick once-over. The next logical step is to figure out who's actually behind the words. Trustworthy information doesn't just materialize out of thin air; it’s created by real people and organizations, and they all have a reputation—good, bad, or otherwise.

The big question is: who wrote this, and who published it? Answering this is how you separate a genuine expert from just another random person with an opinion and an internet connection. A quick search on the author's name usually tells you a lot about their background, where else they've been published, and what people in their field think of them.

An author's credibility is everything. You're essentially looking for proof that they're qualified to speak on the topic. A little digging can help you answer a few critical questions.

This isn't just my personal checklist. It's a core part of established research methods, like the SMART criteria from the University of Washington Libraries, which stresses the importance of an author's authority. Learning these standards is a key step in how to find reliable sources.

The author is only half the story. The publisher matters just as much. A publisher with a solid reputation has a lot to lose by printing junk, so they usually have strict editing and fact-checking processes in place.

Think about it: a university press or a major news organization has a formal review board. A personal blog or a site run by a niche advocacy group? Not so much.

I always make a point to check the "About Us" page on any new site I encounter. It can tell you a lot about the organization's mission, funding, and potential biases. And here's a pro tip: if a publisher regularly issues corrections when they get something wrong, that’s actually a great sign. It shows they care about getting it right.

It's easy to get swayed by a study's flashy headline or surprising conclusion. But the real story—the part that tells you if the information is trustworthy—is always in the research methodology. This is your chance to look "under the hood" and see how they actually arrived at their findings.

Think of it this way: a research study is only as strong as the methods used to conduct it. A weak design leads to questionable results. Learning to spot the difference is a core skill for anyone trying to navigate the sea of information out there.

The first thing I always look for is the sample, which is just the group of people or things that were studied. Two things matter most here: how big was the group, and how were they chosen?

For example, a study that surveyed only 10 people about a new product isn't going to tell you much about how the general public feels. The sample is just too small to be meaningful.
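To put a rough number on "too small," here is a minimal sketch of the standard margin-of-error formula for a surveyed proportion. It assumes a simple random sample and a 95% confidence level (z = 1.96); the function name `margin_of_error` is just an illustrative helper, not something from a particular library. The approximation gets shaky for very small samples, so treat the n = 10 figure as a ballpark.

```python
import math

def margin_of_error(sample_size: int, proportion: float = 0.5, z: float = 1.96) -> float:
    """Approximate margin of error for a proportion from a simple random sample.

    Assumes the normal approximation; proportion=0.5 is the worst case.
    """
    return z * math.sqrt(proportion * (1 - proportion) / sample_size)

# Compare a tiny survey with larger ones.
for n in (10, 100, 1000):
    print(f"n={n:>4}: +/- {margin_of_error(n) * 100:.1f} percentage points")
```

With 10 respondents the uncertainty is roughly 31 percentage points either way, while 1,000 respondents brings it down to about 3.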

Just as important is how those people were selected. A Twitter poll where anyone can vote is a classic example of a weak, self-selecting sample. The results are often skewed because you're only hearing from people who are motivated enough to click a button, not a true cross-section of the population.
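If you like seeing this in action, here is a small simulation sketch. Everything in it is made up for illustration: a hypothetical population of 10,000 people split evenly on a question, and assumed response rates where people who dislike the product are three times as likely to vote in an open poll. It compares a random sample with a self-selected one.

```python
import random

def simulate_polls(seed: int = 0) -> tuple[float, float]:
    """Return (random-sample estimate, self-selected estimate) of support."""
    rng = random.Random(seed)

    # Hypothetical population: exactly half support the product.
    population = [True] * 5000 + [False] * 5000

    # Random sample: 500 people drawn purely by chance.
    random_sample = rng.sample(population, 500)
    random_estimate = sum(random_sample) / len(random_sample)

    # Self-selected poll: assumed response rates of 10% for supporters
    # and 30% for opponents (the more motivated group).
    respondents = [
        supports
        for supports in population
        if rng.random() < (0.10 if supports else 0.30)
    ]
    self_selected_estimate = sum(respondents) / len(respondents)

    return random_estimate, self_selected_estimate

random_estimate, self_selected_estimate = simulate_polls()
print(f"Random sample:      {random_estimate:.0%} support")
print(f"Self-selected poll: {self_selected_estimate:.0%} support")
```

Under these assumptions the random sample lands near the true 50%, while the open poll sits closer to 25%, even though it may collect more responses.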

The gold standard in many fields is the randomized controlled trial. In this setup, participants are assigned to different groups purely by chance. This randomization is powerful because it tends to spread hidden biases evenly across the groups, making the results far more reliable.
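Assignment "purely by chance" can be sketched in a few lines. This is a simplified illustration with hypothetical participant labels, not how any particular trial handles it; real studies often use more elaborate schemes such as blocked or stratified randomization.

```python
import random

def assign_groups(participants, seed=None):
    """Shuffle participants and split them into treatment and control groups."""
    rng = random.Random(seed)  # a fixed seed makes the assignment reproducible
    shuffled = list(participants)
    rng.shuffle(shuffled)
    midpoint = len(shuffled) // 2
    return shuffled[:midpoint], shuffled[midpoint:]

# Hypothetical participant labels, for illustration only.
people = [f"participant_{i}" for i in range(1, 9)]
treatment, control = assign_groups(people, seed=42)
print("Treatment:", treatment)
print("Control:  ", control)
```

Because the shuffle decides who lands where, neither the researchers nor the participants can steer healthier or more motivated people into one group.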

The way data is gathered makes a huge difference. A probability sample, where every person in the population has a known, nonzero chance of being selected, gives you results that you can actually generalize. This is a world away from the convenience of something like an online poll, which can be riddled with bias. If you want to dive deeper, you can read more about how survey methods impact credibility.

Here’s a simple but powerful question I always ask: who paid for this research? This isn't about being cynical; it’s just smart. Understanding who funded a study is fundamental to spotting potential bias.

If you see a study raving about the benefits of a new artificial sweetener, and it was funded by the company that manufactures it, that’s a major red flag. It doesn't automatically mean the results are false, but it does mean you should approach them with a healthy dose of skepticism.

Reputable studies will always be transparent about their funding sources. Look for a section often labeled "Conflicts of Interest" or "Funding Disclosure." If you can't find one, that's a warning sign in itself.

Numbers have a way of looking solid and undeniable, but they can be just as misleading as words if you're not careful. When you’re trying to find reliable information, it’s smart to develop a healthy sense of skepticism toward any statistic you see. Even data from a source that seems trustworthy deserves a closer look.

One of the most common traps people fall into is assuming data is current. The truth is, most statistics you come across are at least a year old. Think about it: collecting, analyzing, and publishing data takes a long time. So, a big part of checking the numbers is also checking the date to make sure they're still relevant to what you're talking about today.

I often see people pulling stats from aggregator sites like Statista. While these sites are handy for getting a quick overview, they’re almost never the original source of the data. Use them as a starting point, but don't stop there. Your real goal is to trace that number all the way back to the original study or report.

Why is this so important? Because it lets you check two critical things:

As the National University Library points out, you have to go to the source to be sure. Getting comfortable with evaluating statistics this way will make your work much stronger.

My two cents: A statistic without a clear link to its original source is just a random number. Don't ever take data at face value—always ask where it came from and how it was collected.

To help you get into the habit, here’s a quick checklist I use to run through the basics when I encounter a new source.

| Evaluation Criteria | What to Look For | Red Flag Example |
|---|---|---|
| Author Expertise | Is the author a recognized expert in this field? Do they have credentials? | A blog post on medical treatments written by a marketing guru. |
| Source Reputation | Is this a well-respected publication, journal, or organization? | A "news" site known for publishing sensational or biased content. |
| Citations | Does the author link to original research or other credible sources? | Claims like "studies show..." with no link or reference to the actual study. |
| Timeliness | When was this information published or last updated? | An article about social media trends from 2015. |
| Objectivity & Bias | What is the author's goal? Is it to inform, or to sell something or persuade you? | A review of a product published on the company's own website. |

This table isn't exhaustive, but it covers the fundamentals. Running through these questions will help you spot unreliable information much more quickly.

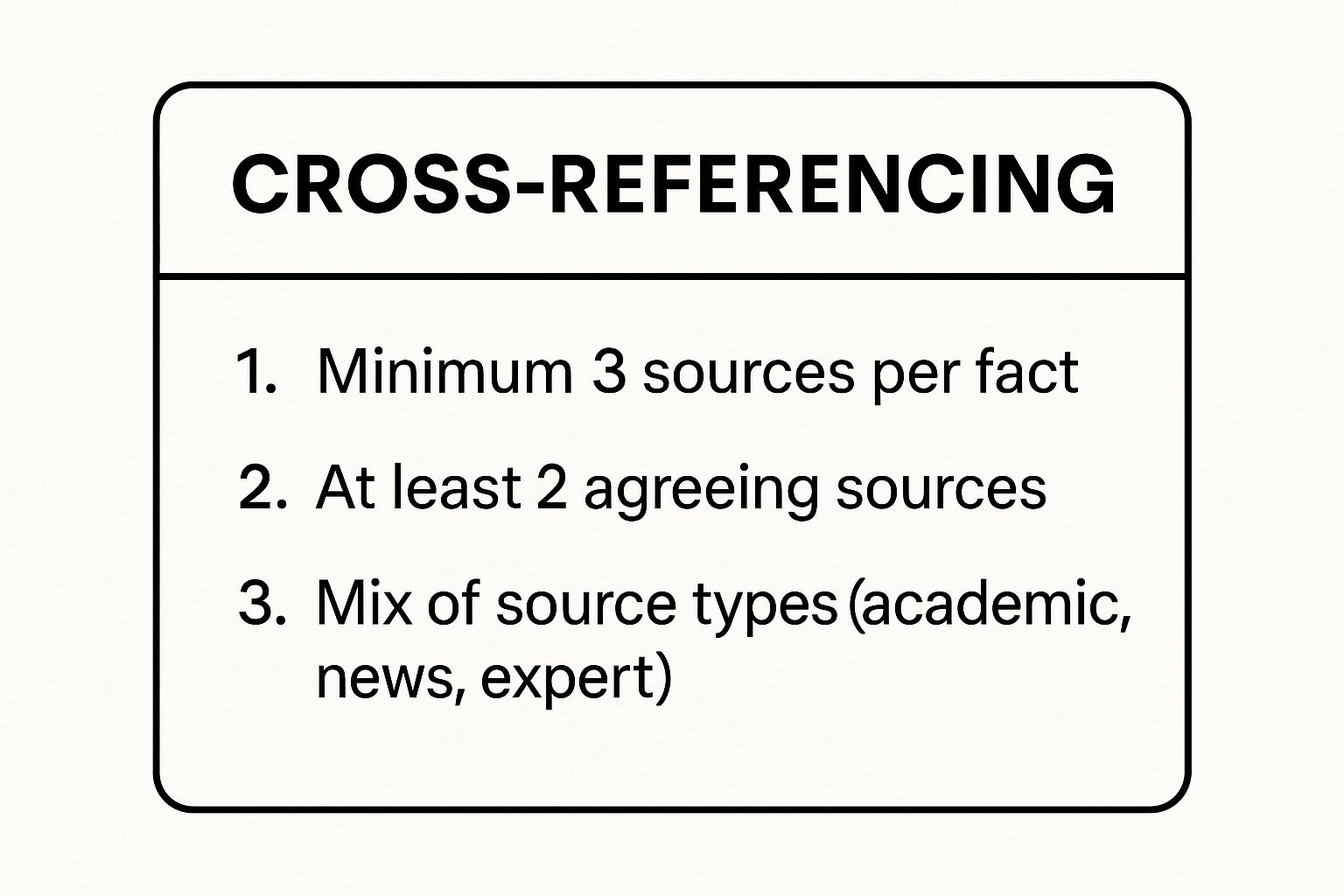

Here’s a rule I live by: never trust a single source for a critical piece of information. If a fact or number is important, I make sure to find at least three independent sources that back it up. This is called cross-referencing, and it's your best safeguard against a single source's error, outdated information, or hidden bias.

This is a great visual that breaks down the idea.

As you can see, getting confirmation from a few different types of sources—like a news article, a research paper, and a government report—builds a much more solid foundation than relying on just one.
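If you keep notes on what you find, the three-source rule is easy to turn into a quick check. The sketch below is only one way to model it: the `Source` fields and the `is_corroborated` helper are hypothetical, and it treats two articles that trace back to the same underlying study as a single origin, which is my assumption about what "independent" should mean here.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Source:
    publisher: str        # who published the piece
    original_study: str   # the underlying report or dataset it relies on
    supports_claim: bool  # whether it backs up the fact being checked

def is_corroborated(sources, minimum=3):
    """True if enough distinct underlying origins support the claim."""
    independent_origins = {s.original_study for s in sources if s.supports_claim}
    return len(independent_origins) >= minimum

# Three outlets repeating one study count as a single origin.
echoes = [
    Source("News site A", "Study X", True),
    Source("Blog B", "Study X", True),
    Source("Magazine C", "Study X", True),
]
# Three different kinds of sources, each with its own evidence.
varied = [
    Source("News site A", "Study X", True),
    Source("University journal", "Study Y", True),
    Source("Government agency", "Report Z", True),
]
print(is_corroborated(echoes))  # False
print(is_corroborated(varied))  # True
```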

Knowing how to check information isn't about becoming a private eye. It's about having the right digital tools ready to go and building a habit of using them. Once you get the hang of it, verification stops feeling like a chore and becomes a quick, automatic part of how you browse the web.

Think about a shocking photo that suddenly pops up in your social media feed, claiming to show a breaking news event. Your first instinct might be to share it, but the better impulse is to question it. This is where a reverse image search comes in handy.

I always keep a couple of these bookmarked. Tools like TinEye or the built-in reverse image search from Google are perfect for this exact scenario. You just upload the image, and these engines will scour the web to show you where else it has appeared and, crucially, when it was first posted.

You might quickly find that dramatic "protest photo" from today is actually from a different country and is five years old. It's a simple, powerful check that can instantly expose manipulated or out-of-context content.

Beyond just images, it's smart to have a few trusted fact-checking websites in your rotation. These are my top picks for when a specific claim, statistic, or quote seems a little off.

The real goal here is to make cross-referencing second nature. When you build these tools into your regular online routine, you create a powerful personal defense against the flood of misinformation. It’s a core principle behind platforms like , which encourages community-driven verification by transparently citing reliable sources and tools just like these.

Even when you know what to look for, some sources just don't fit neatly into the "reliable" or "unreliable" box. You're going to hit gray areas. Let's walk through a few of the most common challenges I see people grapple with.

You’ve found a fantastic statistic or a perfect quote, but there's no author's name attached. This happens all the time, especially with reports from big organizations or government websites.

When this happens, you have to shift your focus from the person to the institution.

Is the publisher a well-regarded university, a government body like the Centers for Disease Control and Prevention, or a long-standing research nonprofit? If the organization has a solid reputation to uphold, its content has almost certainly been through a tough internal review. In these cases, you can feel confident treating the institution as the author.

On the other hand, an anonymous blog post or an article on a site with an obvious axe to grind and no "About Us" page is a major red flag. If no one is willing to put their name on it, why should you trust it? Accountability is a cornerstone of credibility.

It’s definitely confusing when you find two highly qualified experts with completely opposite takes on the same subject. This is a classic stumbling block. But remember, this disagreement doesn't automatically mean one of them is a fraud. Progress in science and knowledge often comes from rigorous debate.

Your role here is to step back and analyze the debate itself.

The point isn't to pick a winner and a loser. It’s to grasp the nuances of the argument and the quality of the evidence on each side. The real truth is often found in that complex middle ground, not in a simple, black-and-white answer.

Every news outlet has a perspective; that's just human nature. The key is to distinguish between a professional journalistic viewpoint and manipulative bias.

For a quick check, pay close attention to the language. Are they using emotionally loaded words like "shocking," "nightmare," or "miraculous"? That’s a good sign the author is trying to make you feel a certain way, not just inform you.
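You can even automate a crude first pass at this. The sketch below simply flags words from a small watchlist; the list contains only the three examples above and is an illustrative starting point, not a validated lexicon. A hit is a prompt to read more carefully, not proof of bias.

```python
import re

# Illustrative watchlist only; extend it with terms you see abused in your field.
LOADED_WORDS = {"shocking", "nightmare", "miraculous"}

def find_loaded_words(text):
    """Return the watchlist words that appear in the text, in order of appearance."""
    words = re.findall(r"[a-z']+", text.lower())
    return [word for word in words if word in LOADED_WORDS]

headline = "A SHOCKING report reveals a miraculous cure."
print(find_loaded_words(headline))  # ['shocking', 'miraculous']
```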

Another dead giveaway is whether the story feels one-sided. Does it only include quotes and facts that support a single narrative? A balanced piece of journalism will almost always include voices from different sides of an issue. If it reads more like a prosecution's closing argument than a fair report, you should be skeptical.

At Factiii, we’re passionate about helping people build these critical thinking skills. Our community platform is designed to be a training ground where you can practice evaluating sources, discussing evidence, and collaborating with others to get to the bottom of things. Come join a community that's serious about separating fact from fiction.