# Mastering the Evaluation of Research Papers: A Complete Guide

You don't need to spend hours on a paper just to find out it’s not worth your time. A quick, five-minute scan is often all it takes to decide if a piece of research deserves a closer look. This initial once-over isn't about judging the science itself, but about spotting obvious red flags that can save you a lot of frustration later.

Think of it as a triage for your reading list.

### Your First Pass: A Quick Credibility Check

Before you even think about the methodology or results, start with the basics. Who wrote this, and where do they work? A quick search on the authors can tell you if they're known in their field and affiliated with a respected university or research institution. While great work can come from anywhere, a lack of a credible affiliation can sometimes be a warning sign.

Next, look at where the paper was published. Is the journal a reputable name in its field? Does it have a clear, rigorous peer-review process? Be skeptical of journals you've never heard of, especially those that seem to publish a high volume of articles without much oversight.

The publication date is just as crucial. Research moves quickly, and an older paper might be completely out of date. You always want to place the findings in their historical context.

A good example of this is a bibliometric analysis of End-of-Life Care research, which found exponential growth in publications over time. The study noted that the top **20** journals in this field were set apart by high citation rates and impact factors, which suggests that a journal's reputation is a useful proxy for its influence and credibility. You can see a breakdown of how researchers track these trends in this [comprehensive study on End-of-Life Care](https://pmc.ncbi.nlm.nih.gov/articles/PMC9517393/).

### The Quick Credibility Assessment Checklist

Before you commit to a deep dive, run through this quick checklist. It helps you systematically spot the most common red flags at a glance.

| Checklist Item | What to Look For | Why It Matters |
| :--- | :--- | :--- |
| **Author Affiliation** | Are the authors from a reputable university, hospital, or research center? | Established institutions typically have higher research standards and oversight. |
| **Journal Reputation** | Is the journal well-known and peer-reviewed in its field? Look for its impact factor. | Reputable journals have a rigorous vetting process, filtering out weak research. |
| **Publication Date** | Is the research recent enough to be relevant to your topic? | Science evolves. Old data may be outdated or superseded by new findings. |
| **Abstract Clarity** | Does the abstract clearly state the research question, methods, and key findings? | A confusing or vague abstract often signals a poorly structured or unfocused paper. |
| **Conflict of Interest** | Is there a disclosure statement? Does the funding source present a potential bias? | Funding can influence research outcomes, so it's important to be aware of potential conflicts. |

This checklist isn't a final judgment, but if you're ticking "no" on several of these points, you might want to move on to a different paper.
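
As a rough illustration, the checklist can be treated as a set of yes/no answers. The sketch below is a hypothetical helper, not a standard tool: the item names, the `triage` function, and the threshold of three "no" answers are all assumptions, chosen only to make "several" concrete.

```python
# Hypothetical triage helper. The item names mirror the checklist table,
# and the default threshold of 3 is an assumed stand-in for "several".
CHECKLIST_ITEMS = [
    "author_affiliation",
    "journal_reputation",
    "publication_date",
    "abstract_clarity",
    "conflict_of_interest",
]


def triage(answers: dict[str, bool], max_red_flags: int = 3) -> tuple[list[str], str]:
    """Return the failed checklist items and a rough recommendation.

    True means the paper passes that item (for conflict_of_interest,
    that a disclosure exists and the funding raises no obvious concern).
    """
    # A missing answer counts as a "no" so gaps are not silently ignored.
    red_flags = [item for item in CHECKLIST_ITEMS if not answers.get(item, False)]
    if len(red_flags) >= max_red_flags:
        return red_flags, "consider moving on"
    return red_flags, "worth a closer look"


example_answers = {
    "author_affiliation": True,
    "journal_reputation": False,
    "publication_date": True,
    "abstract_clarity": False,
    "conflict_of_interest": False,
}
flags, verdict = triage(example_answers)
print(flags, verdict)
```

The threshold is deliberately a parameter: how many red flags you tolerate depends on how much time you have and how central the paper is to your question.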

### Clarify The Core Objectives

Finally, spend a minute on the abstract. This is your window into the entire paper. It should clearly and concisely lay out the research question, the methods used, the most important findings, and the conclusion.

> **Pro Tip:** Watch out for abstracts that make huge, confident claims without backing them up. Good research is usually presented with a healthy dose of caution and precision. If it sounds too good to be true, it might be.

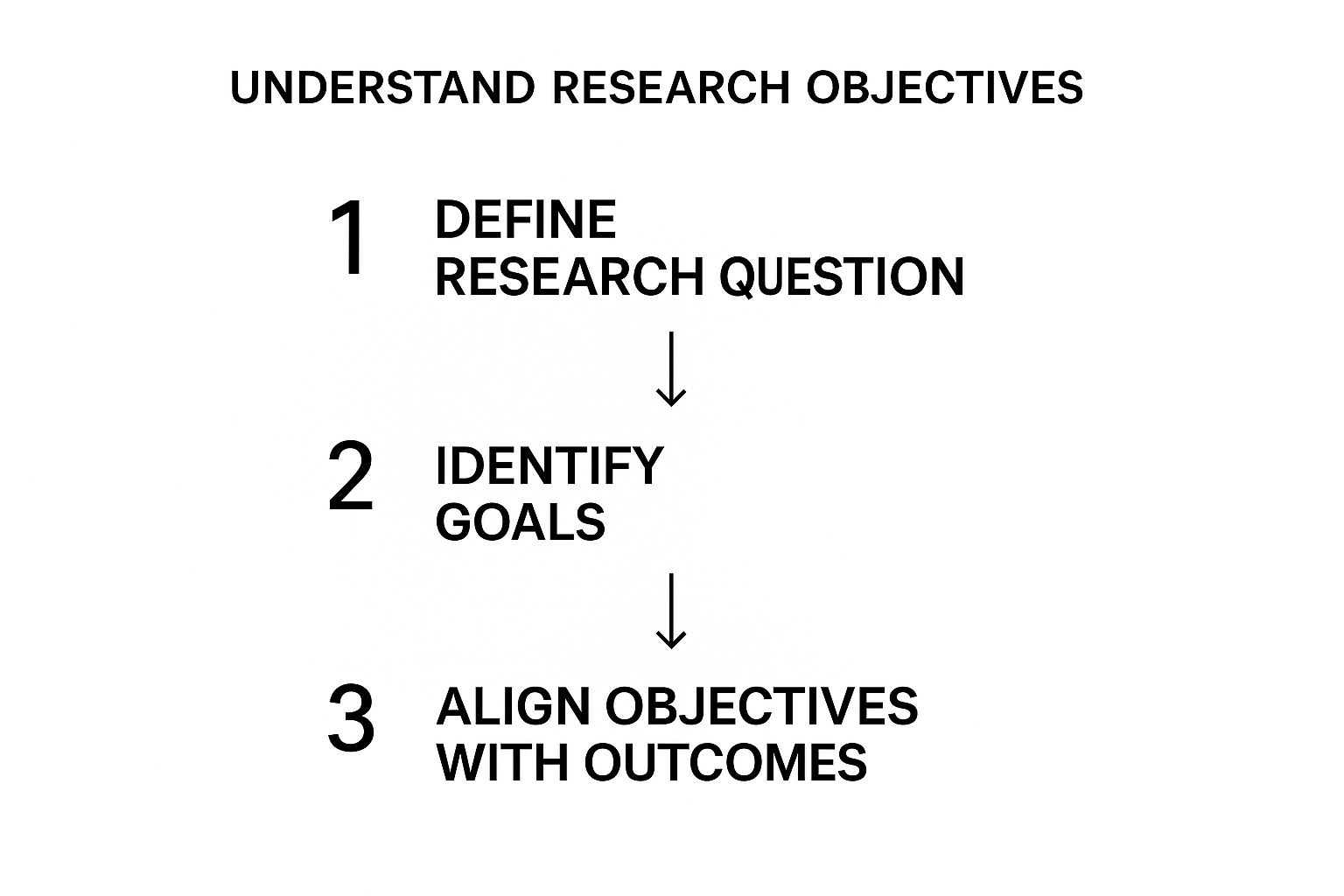

A well-structured paper will have a clear and logical flow from its central question to its objectives.

As this image shows, a solid research question is the bedrock. It leads to specific goals and measurable objectives. If you can't easily trace this path in the abstract or introduction, that's a warning sign that the paper may lack the focus and structure needed for credible research. Taking these few minutes upfront ensures the time you do invest is spent on papers that are truly worth it.

## How to Take Apart the Research Methodology

The methodology section is the engine room of any research paper. It’s where the authors have to show their work and prove their results are built on a solid foundation. This part of the paper explains *how* they reached their conclusions, and believe me, a shaky method can make the entire study crumble.

Your job here is to look past the dense, technical jargon and really ask: Was their approach sound? Was it the right one for the question they were asking? A paper might boast about using a "randomized controlled trial," but the real test is how well they actually pulled it off.

### Check Out the Sample and Participants

First things first, look at who—or what—they studied. Who were the participants? How did the researchers pick them? The group they study (the sample) needs to be a good stand-in for the larger population they want to talk about.

For example, if a study on a new coding tool only includes junior developers from one startup in San Francisco, the authors can't credibly claim their findings apply to all software developers worldwide. That’s a textbook case of **selection bias**, where the sample is lopsided from the get-go, poisoning the results.
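
To make the startup example concrete, here is a small simulation. Every number in it is made up: it assumes a population of 10,000 developers and a tool that happens to help juniors more than seniors. It is a sketch of how a convenience sample can drift away from the population, not a model of any real study.

```python
import random
import statistics

random.seed(42)  # fixed seed so the illustration is repeatable

# Made-up population: 10,000 developers with 0-20 years of experience.
# Assumption for illustration only: the tool helps juniors more than seniors.
population = []
for _ in range(10_000):
    years = random.uniform(0, 20)
    gain = 30 - 1.2 * years + random.gauss(0, 5)  # % productivity gain
    population.append((years, gain))

true_mean = statistics.mean(gain for _, gain in population)

# Convenience sample: 200 junior developers (under 2 years of experience).
juniors = [gain for years, gain in population if years < 2]
junior_mean = statistics.mean(random.sample(juniors, 200))

# Random sample of the same size drawn from the whole population.
random_mean = statistics.mean(gain for _, gain in random.sample(population, 200))

print(f"population mean gain:   {true_mean:.1f}%")
print(f"juniors-only sample:    {junior_mean:.1f}%")
print(f"random sample of 200:   {random_mean:.1f}%")
```

Both samples contain 200 people, so size is not the issue. The juniors-only sample overstates the benefit because of who was allowed into it.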

A solid methodology will give you a clear demographic breakdown and a logical reason for how they chose their sample. A weak one will be fuzzy on the details or, worse, use a sample that was just easy to get, not the right one for the job.

### Dig Into the Data Collection Tools

Next, turn your attention to how the data was actually gathered. You need to know if the tools they used were both reliable and valid. **Reliability** just means the tool gives consistent results if you use it again. **Validity** means the tool actually measures what it’s supposed to measure.
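
One common way to put a number on reliability is test-retest correlation: give the same tool to the same people twice and see how closely the scores agree. The sketch below uses invented scores for eight hypothetical participants and a hand-written Pearson correlation. It says nothing about validity, which needs separate evidence.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length lists."""
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    return covariance / (spread_x * spread_y)


# Invented questionnaire scores for the same eight people, two weeks apart.
first_administration = [12, 15, 9, 20, 17, 11, 14, 18]
second_administration = [13, 14, 10, 19, 18, 10, 15, 17]

test_retest_r = pearson_r(first_administration, second_administration)
print(f"test-retest correlation: {test_retest_r:.2f}")
```

A value close to 1 suggests the tool gives consistent results. When a paper reports a reliability figure like this for its instruments, that is a good sign the authors checked.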

Here’s what to look for:

* **Surveys and Questionnaires:** Were the questions written in a neutral way? It's surprisingly easy to write a leading question that nudges people toward a certain answer. Good papers will mention that their questionnaire was pre-tested or uses well-established, validated scales.

* **Experiments:** Did they use a control group? This is critical. A control group gives you a baseline to compare the results against. Without one, you have no way of knowing whether the changes came from the intervention or from something else, such as a placebo effect, the passage of time, or plain random variation.

* **Observations:** If people were observing behavior, were they all trained to use the same checklist? This is key to cutting down on subjective guesswork and making sure everyone is recording things the same way.

> Here's a common red flag I always look for: a custom-built, unvalidated questionnaire. If the authors made their own survey from scratch, they absolutely must prove that it works as intended. If they don't, the data they collected with it is pretty much worthless.

### Hunt for Biases and Other Errors

Even the most carefully planned study can have biases sneak in. When you're evaluating research, developing an eye for these subtle problems is what separates a quick skim from a truly critical analysis.

Think about a medical study testing a new pill. If the researchers know which patients are getting the real drug and which are getting a sugar pill (a placebo), they might unconsciously treat the groups differently. That's why **double-blind studies**—where neither the researchers nor the patients know who is getting what—are the gold standard.

Another troublemaker is **measurement error**. This can be as simple as a poorly calibrated instrument or a survey question that everyone seems to misunderstand. A well-written paper won't hide from these issues. Instead, it will openly discuss potential sources of error and explain what they did to control for them. The more honest and thorough they are about these challenges, the more you can trust their conclusions.

## Analyzing Data and Interpreting the Results

So, you’ve confirmed the study's methods are sound. Great. Now comes the real detective work: digging into the data to see if the authors' claims actually hold up.

This is where you have to separate the raw evidence from the narrative the paper is weaving. It’s easy to just glance at a few charts and nod along, but a truly critical reader forms their own conclusions directly from the data presented.

You need to ask tough questions about every single table, graph, and statistical output. Does a chart really say what the text claims it does? I’ve seen my fair share of graphs where a tweaked axis makes a tiny difference look like a groundbreaking discovery.

### Scrutinizing the Statistical Evidence

Don't let the statistical jargon throw you off. You don't need to be a statistician, but you do need to understand the basic logic. The main thing to ask is: did they use the right tool for the job?

For instance, if a study has five different groups but the researchers used a statistical test designed for only two, that's a major red flag. It's a fundamental mismatch that can completely invalidate the results. A well-written paper won't just name the tests; it will briefly explain *why* they were chosen.
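
One way to see why the mismatch matters: if researchers run a two-group test on every pair among five groups, the chance of at least one false positive climbs quickly. The arithmetic below is a simplified sketch that assumes each test is independent and uses a 0.05 threshold. Real comparisons are not fully independent, so treat the result as a rough guide.

```python
import math

groups = 5
alpha = 0.05  # conventional per-test false-positive rate (an assumption here)

# Number of distinct pairs you can form from five groups.
pairwise_tests = math.comb(groups, 2)

# Chance that at least one of those tests is a false positive,
# assuming (for simplicity) that the tests are independent.
familywise_error = 1 - (1 - alpha) ** pairwise_tests

print(f"pairwise tests: {pairwise_tests}")
print(f"chance of at least one false positive: {familywise_error:.0%}")
```

This is why multi-group studies typically use a test built for several groups, or apply a correction for multiple comparisons, and why a paper should say which one it used.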

> A common trap I see people fall into is confusing **statistical significance** with **practical significance**. A result might be statistically "real" (unlikely to be a fluke), but the effect could be so tiny it has zero real-world meaning. Always hunt for the **effect size**—that’s what tells you how much the finding actually matters.
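
A quick calculation shows how the two can come apart. The numbers below are invented: two groups of 20,000 people whose average scores differ by half a point on a scale with a standard deviation of 15. The sketch uses Cohen's d as the effect size and a large-sample z-test as a stand-in for whatever test a real paper would use.

```python
import math


def cohens_d(mean_a, mean_b, pooled_sd):
    """Standardized difference between two group means."""
    return (mean_b - mean_a) / pooled_sd


def two_sided_p_from_z(z):
    """Two-sided p-value for a z statistic under the normal distribution."""
    return math.erfc(abs(z) / math.sqrt(2))


# Invented summary statistics for illustration only.
mean_control, mean_treated = 100.0, 100.5
sd = 15.0
n_per_group = 20_000

d = cohens_d(mean_control, mean_treated, sd)
standard_error = sd * math.sqrt(2 / n_per_group)
z = (mean_treated - mean_control) / standard_error
p_value = two_sided_p_from_z(z)

print(f"p-value:   {p_value:.4f}")
print(f"Cohen's d: {d:.3f}")
```

The p-value comes out well below 0.05, yet d is far below the 0.2 that is conventionally labeled a small effect. With a large enough sample, almost any difference becomes statistically significant.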

### Spotting Inconsistencies and Questioning the Narrative

Now, actively hunt for disconnects between the data and the story. This is probably the most important skill you can develop for evaluating research.

Here are a few things I always check:

* **Do the Numbers Add Up?** If the text mentions percentages, pull out your calculator and check them against the raw numbers in the tables. You'd be surprised how often you find small (or large) errors.

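* **What Do the Error Bars Say?** On a graph, wide error bars mean lots of uncertainty. First check what the bars represent (standard deviation, standard error, or a confidence interval), because a careful paper will say. Even if the average points look far apart, heavily overlapping error bars suggest the difference may not be as solid as it seems.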

* **Correlation Isn't Causation:** This is an old one, but it’s a classic for a reason. Authors might hint that A caused B, when the data only shows that A and B are connected. Never assume causality unless the study was specifically designed to test it (like a randomized controlled trial).
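
The "do the numbers add up" check is easy to automate when a paper gives raw counts. The sketch below uses a hypothetical three-row table. The function name and the half-point tolerance for rounding are assumptions, not a standard.

```python
def find_percentage_mismatches(rows, total, tolerance=0.5):
    """Return labels whose reported percentage differs from count / total."""
    mismatches = []
    for label, count, reported_pct in rows:
        actual_pct = 100 * count / total
        if abs(actual_pct - reported_pct) > tolerance:
            mismatches.append(label)
    return mismatches


# Hypothetical table: (label, raw count, percentage claimed in the text).
rows = [
    ("Group A", 45, 37.5),
    ("Group B", 30, 29.0),
    ("Group C", 45, 37.5),
]
total = sum(count for _, count, _ in rows)

mismatches = find_percentage_mismatches(rows, total)
print(mismatches)
```

Here Group B is flagged: 30 out of 120 is 25%, not 29%. A mismatch is not proof of misconduct, since typos happen, but it is a reason to read the rest of the numbers more carefully.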

Researchers are also getting smarter about measuring impact beyond just counting citations. A global study on occupational health, for example, used tools like [SciVal](https://www.elsevier.com/products/scival) to look at metrics like the **Field Weighted Citation Impact (FWCI)**. An FWCI score over **1** means a paper has been cited more than expected for similar publications (same field, publication year, and document type). It's a much more balanced way to compare influence. You can get a better sense of these data-driven approaches in this [research impact analysis](https://pmc.ncbi.nlm.nih.gov/articles/PMC9791871/).
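
At its core, FWCI is a ratio: the citations a paper actually received divided by the citations expected for comparable publications. The sketch below shows only that ratio, with invented numbers. How the expected value is derived is up to the metric provider and is not reproduced here.

```python
def field_weighted_citation_impact(citations_received, citations_expected):
    """Ratio of actual citations to the expected count for similar papers."""
    if citations_expected <= 0:
        raise ValueError("expected citations must be positive")
    return citations_received / citations_expected


# Invented example: 12 citations where comparable papers average 8.
fwci = field_weighted_citation_impact(12, 8)
print(f"FWCI: {fwci:.2f}")
```

A ratio like this lets you compare a paper in a slow-citing field with one in a fast-citing field, which raw citation counts cannot do.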

By putting the results under this kind of microscope, you stop being a passive consumer of information. You're actively engaging with the research, questioning it, and building an informed opinion. That’s the real goal here—to make sure the conclusions you walk away with are built on a foundation of solid evidence.

## What Are They *Really* Saying in the Discussion and Conclusion?

You've picked apart the methods and stared at the results. Now comes the part where the authors tell you what they think it all means. The discussion and conclusion are their chance to connect the dots, but it's also where a solid study can get derailed by overzealous interpretation. Think of yourself as a juror, weighing their final arguments against the evidence they've shown you.

A good discussion isn't just a rehash of the results. It should wrestle with the findings and place them in the bigger picture. Does this research shake up an old theory or does it add another brick to a well-established wall? How does it stack up against what others have found? This is where a paper stops being a simple data report and starts offering real insight.

### Look for Honest Limitations

I’ll let you in on a little secret: no study is perfect. Not a single one. The best researchers are keenly aware of this. In fact, one of the clearest signs of a credible paper is a section that openly discusses the study's limitations. When authors are upfront about potential weaknesses, it signals that they have a firm, realistic grasp of their work.

On the flip side, be very wary of an overly confident tone that breezes past any potential flaws. If the authors don't mention limitations, it's not because their study was flawless. It’s usually because they’re either blind to them or, worse, hoping you won't notice. Both scenarios should make you skeptical.

> **My Two Cents:** I always look for this section first. The most trustworthy researchers are their own toughest critics. They'll be the first to tell you their sample size was on the small side or that their method couldn't account for a specific variable. That kind of transparency is what builds trust.

An author who just gives a quick, dismissive nod to limitations isn't earning any points. A truly thoughtful discussion, however, will not only name a limitation but also explain *how* it might have influenced the results.

### Are Their Interpretations Grounded in Reality?

This is where you really need to put your critical thinking hat on. You have to judge whether the authors' conclusions are a reasonable interpretation of their data or a wild, ambitious leap. It happens more than you'd think.

For instance, a classic mistake is confusing correlation with causation. If a study finds a link between two things—say, ice cream sales and shark attacks—do the authors responsibly point out it's just a correlation? Or do they start suggesting that ice cream makes people more appealing to sharks? The conclusions have to be supported *directly* and *conservatively* by the data they presented.
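
The ice cream example is easy to simulate. In the sketch below, daily temperature drives both ice cream sales and a made-up "shark incident index", and neither affects the other. All coefficients are invented. The point is only to show a strong correlation appearing without any causal link, then vanishing once the shared cause is accounted for.

```python
import math
import random

random.seed(7)  # fixed seed so the illustration is repeatable


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length lists."""
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    return covariance / (spread_x * spread_y)


def residuals(ys, xs):
    """Remove the straight-line effect of xs from ys (simple linear regression)."""
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    slope = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / sum(
        (x - mean_x) ** 2 for x in xs
    )
    return [y - (mean_y + slope * (x - mean_x)) for x, y in zip(xs, ys)]


# Temperature is the hidden common cause; the two outcomes never interact.
temperature = [random.uniform(10, 35) for _ in range(1000)]
ice_cream_sales = [20 * t + random.gauss(0, 50) for t in temperature]
shark_incident_index = [0.2 * t + random.gauss(0, 1) for t in temperature]

raw_r = pearson_r(ice_cream_sales, shark_incident_index)
adjusted_r = pearson_r(
    residuals(ice_cream_sales, temperature),
    residuals(shark_incident_index, temperature),
)

print(f"raw correlation:            {raw_r:.2f}")
print(f"after removing temperature: {adjusted_r:.2f}")
```

When a paper reports a correlation, it is worth asking whether the authors measured and adjusted for plausible common causes like this one.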

As you read, ask yourself these questions:

* **Is the claim proportional to the evidence?** A small, exploratory study shouldn't be making grand, sweeping statements about all of humanity. The claims should match the scale of the research.

* **Did they consider other explanations?** A strong discussion will explore alternative ways to interpret the data, even if the authors ultimately defend their own viewpoint.

* **Does the conclusion actually answer the original research question?** Sometimes, a paper gets sidetracked, and the final conclusion doesn't quite line up with what the study set out to discover in the first place.

By carefully working through the discussion, you can separate a paper that thoughtfully analyzes its findings from one that's just trying to sell you on its own importance. It’s the final, crucial step to understanding what a study *really* adds to the conversation.

## Assessing the Paper's True Impact and Relevance

https://www.youtube.com/embed/qZmcYRPsiIo

A research paper can tick all the right boxes—flawless methodology, perfectly analyzed data, logical conclusions—and still be completely irrelevant. Once you’ve confirmed a study is technically sound, the final and most important step is to zoom out and ask: **does this actually matter?**

This is where you stop looking at the paper itself and start looking at its life out in the wild. A paper's true value isn't just in what it says, but in the conversation it starts, the work it inspires, and the change it creates.

### Moving Beyond Simple Citation Counts

The most obvious place to start is citation metrics. Tools like [Google Scholar](https://scholar.google.com/) or Scopus can tell you how many times a paper has been cited, which at first glance seems like a great measure of its importance. But honestly, raw numbers can be deceiving.

A high citation count doesn't automatically mean high impact. A paper might be cited a lot simply because it's controversial, or even because other researchers are busy pointing out its flaws. You have to dig a little deeper.

Instead of just looking at the number, ask yourself *who* is citing the paper.

* Is it popping up in major review articles that define the current state of the field?

* Are professionals citing it in official guidelines or policy documents?

* Are the big names in the field building their own research on top of its findings?

Answering these questions gives you a much richer understanding of the paper's real influence. A single citation in a national healthcare guideline, for instance, is a far stronger signal of impact than a dozen citations in obscure journals.

### Gauging Real-World Relevance and Social Impact

At the end of the day, great research should have a connection to the real world. A huge part of your evaluation is figuring out if the research is not just scientifically solid but also socially relevant and actionable. Does it actually help anyone solve a problem?

> A paper’s ultimate test is its utility. Does it provide a new tool for practitioners, offer a fresh lens for policymakers, or lay the groundwork for a future breakthrough? If a paper is scientifically perfect but sits on a digital shelf gathering dust, its impact is minimal.

This isn't just a personal opinion; it's a core principle for major evaluation bodies. For example, an evaluation from the UNODC emphasized that expert peer-review panels are crucial for making sure research is credible. The report stressed that any evaluation has to consider a paper's potential to inform real-world policy and practice. You can see how they approach this in their full evaluation brief.

Thinking this way changes how you read research. You're no longer just a critic of scientific methods; you're an assessor of real-world value. Asking "is this useful?" is one of the most powerful questions you can bring to any paper you read.

## Common Questions About Evaluating Papers

Even with a solid framework, you're going to run into tricky situations when evaluating research. This is where you graduate from simply following a checklist to developing a real, intuitive feel for critical analysis. Let's dig into some of the most common hurdles that stump even seasoned researchers.

A classic one is what to do when you just don't agree with the author's conclusions. It’s crucial to figure out if it's just a difference of opinion or if the conclusion is genuinely disconnected from the data. If their data is solid but you interpret it differently, that's actually a great sign! It points to a complex topic ripe for debate.

But if you read the conclusion and it feels like a massive leap from the evidence they showed you, that's a serious red flag. Always circle back to the results section. Ask yourself, "Does the evidence *really* support this claim, or are they stretching things?"

### What Is the Single Most Important Part to Evaluate?

If I had to pick just one thing, it would be the **methodology**. Every time.

Think of it as the foundation of a house. If that foundation is cracked—maybe the sample size is laughably small, the data collection was biased, or they used the wrong statistical tests—then the whole structure is compromised. It doesn't matter how beautifully written the discussion is or how groundbreaking the conclusion sounds.

If the research process itself was flawed, you simply can't trust the results. That's why the methodology section deserves your most intense focus when you're trying to figure out if a paper is legit.

### Can I Trust a Paper with Few Citations?

Yes, absolutely. A low citation count isn't an automatic deal-breaker. There are plenty of good reasons why a solid paper might not have a long list of citations.

* **It’s Too New:** Truly innovative research needs time. A paper published just last month won’t have had a chance to be read, understood, and cited by other scholars yet.

* **It’s in a Niche Field:** A paper on a highly specialized topic might only be relevant to a small group of experts, which naturally means fewer citations.

* **It Presents a Novel Idea:** Sometimes, a radically new idea just takes a while for the academic community to catch up to and engage with.

> The real trick is to judge the paper on its own merits first. Dig into its methodology, the strength of its evidence, and how logical its conclusions are. Think of citation counts as a secondary clue for context, not the final word on its quality.

### How Do I Evaluate a Paper Outside My Expertise?

You don’t have to be a leading expert in a field to make a good call on a paper's quality. This is when you fall back on the universal principles of what makes research good in the first place.

You can still effectively check for key things:

* **Clarity of the Argument:** Is the research question clear and focused? Do you know exactly what they're trying to figure out?

* **Logical Flow:** Does the paper read like a coherent story, moving logically from the introduction to the conclusion?

* **Evidence-Based Conclusions:** Are the big claims they make in the conclusion directly and clearly supported by the results they presented?

* **Acknowledged Limitations:** Do the authors come clean about their study's weaknesses? Honesty here is a great sign.

By focusing on these structural and logical elements, you can get a surprisingly accurate sense of a paper's quality, even if the nitty-gritty of the subject matter is new to you.
