Top Data Verification Techniques to Ensure Data Accuracy

by @outrank | Factiii

In a world saturated with information, the line between fact and fiction is increasingly blurred. From critical business decisions and academic research to public policy and investigative journalism, the quality of our data dictates the quality of our outcomes. Raw information, no matter how plentiful, is useless without certainty. But how can we be sure the data we rely on is accurate, consistent, and trustworthy?

The answer lies in robust **data verification**. This process isn't just a technical step; it's the fundamental safeguard for credible information systems and sound decision-making. It is the crucial action of confirming that your data is correct, complete, and free from corruption. Without it, you risk building strategies, arguments, and entire systems on a faulty foundation, leading to flawed conclusions and costly mistakes.

This guide moves beyond theory to provide a comprehensive catalog of the most effective **data verification techniques** available today. We will explore eight essential methods that form the bedrock of data integrity, diving deep into their practical implementation, real-world use cases, and actionable tips. You will learn precisely how to apply these techniques to fortify your data against corruption, tampering, and human error.

Whether you're a developer building a resilient backend, a journalist corroborating sources, or a researcher committed to accuracy, mastering these methods is non-negotiable. This listicle is your practical roadmap to ensuring the data you use is not just present, but correct. We will cover:

* Hash Functions (Checksums)

* Digital Signatures

* Data Profiling

* Data Validation Rules

* Cross-Referencing

* Checksums and Error Detection Codes

* Statistical Analysis and Outlier Detection

* Blockchain-Based Verification

## 1. Hash Functions (Checksums)

Hash functions are among the most reliable data verification techniques available, and the values they produce are often published as "checksums." A hash function is a mathematical algorithm that takes an input of any size, such as a file or a message, and produces a fixed-length string of characters. This output, known as a hash value or digital fingerprint, is effectively unique to the original data: for a secure algorithm, finding two different inputs that produce the same hash is computationally infeasible.

The core principle is simple: if the data changes, even by a single bit, the resulting hash value changes dramatically. This makes hashes well suited to verifying data integrity. You can calculate the hash of a file before sending it, and the recipient can calculate the hash of the file they receive. If the two hashes match, the recipient can be highly confident that the data was not altered or corrupted in transit, provided the original hash was obtained through a trusted channel (an attacker who can replace the file can often replace a hash published right next to it).
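
The compare-before-and-after workflow above can be sketched with Python's standard `hashlib` and `hmac` modules. This is a minimal illustration rather than a hardened tool: it assumes the expected SHA-256 value was obtained from a trusted source, and the helper names `sha256_of_file` and `matches_expected` are hypothetical.

```python
import hashlib
import hmac


def sha256_of_file(path: str, chunk_size: int = 65536) -> str:
    """Compute the SHA-256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        # Reading fixed-size chunks keeps memory use flat, even for huge files.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_expected(path: str, expected_hex: str) -> bool:
    """Return True if the file's SHA-256 digest equals the published value."""
    actual = sha256_of_file(path)
    # Normalize the published value, then compare in constant time.
    return hmac.compare_digest(actual, expected_hex.strip().lower())
```

For example, `matches_expected("installer.zip", published_sha256)` returns `True` only when the downloaded file hashes to the published value; the file name and variable are placeholders.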

### How Hash Functions Work

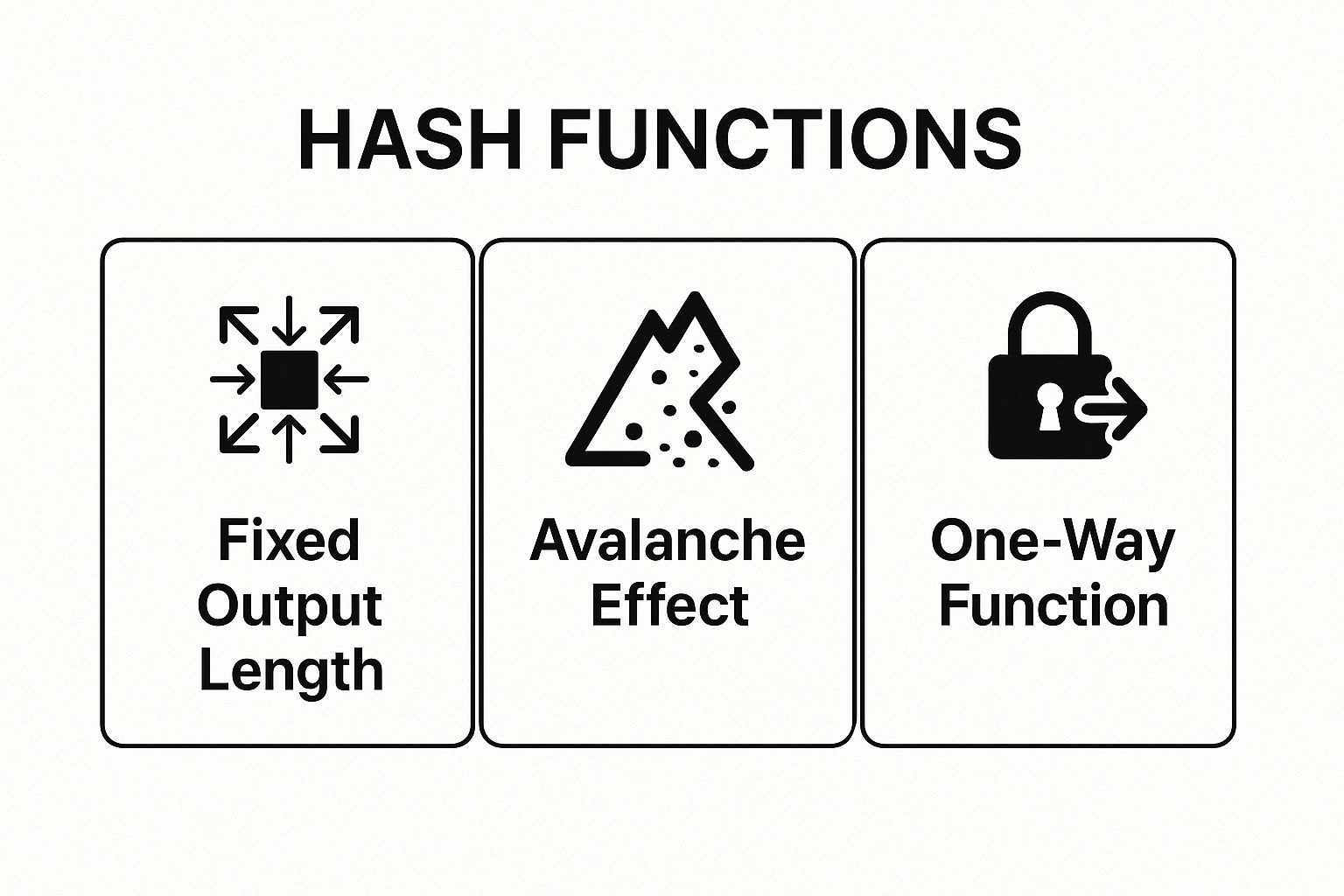

Imagine feeding a document into a highly complex blender that always produces a small, unique smoothie. No matter how large the document, the smoothie is always the same size. This "smoothie" is the hash value. The slightest change to the document's ingredients results in a completely different smoothie. This sensitivity to change, known as the **avalanche effect**, is a key feature of secure hashing. Another critical aspect is that they are **one-way functions**, meaning it's practically impossible to reverse-engineer the original data from its hash value.

This infographic summarizes the three core properties that make hash functions a powerful data verification technique.

Together, fixed-length output, the avalanche effect, and one-way computation make a hash value a reliable and secure fingerprint for its corresponding data.
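
Two of these properties, fixed-length output and the avalanche effect, are easy to observe directly. The short sketch below hashes two made-up messages that differ by a single character and counts how many of the 256 output bits change; the helper names are hypothetical. (The one-way property cannot be demonstrated this way; it rests on the absence of any known efficient method for inverting the function.)

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of the data as 64 hex characters."""
    return hashlib.sha256(data).hexdigest()


def differing_bits(hex_a: str, hex_b: str) -> int:
    """Count how many bits differ between two equal-length hex digests."""
    return bin(int(hex_a, 16) ^ int(hex_b, 16)).count("1")


original = sha256_hex(b"Transfer $100 to account 12345")
tampered = sha256_hex(b"Transfer $900 to account 12345")

# Fixed-length output: both digests are 64 hex characters (256 bits).
print(len(original), len(tampered))  # 64 64
# Avalanche effect: a one-character edit typically flips around half the bits.
print(differing_bits(original, tampered))
```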

### Use Cases and Implementation Tips

Hash functions are used everywhere, from securing software downloads to underpinning blockchain technology.

* **Software Downloads:** Reputable software sites often provide an MD5 or SHA-256 hash for their files. Users can calculate the hash of their downloaded file to confirm it hasn't been tampered with.

* **Version Control:** Systems like Git use SHA-1 hashes to identify each commit, ensuring the integrity of the code history.

* **Database Integrity:** Administrators compute hashes of database backups when they are created and recompute them later, for example before a restore, to detect any data corruption.

> **Pro Tip:** Always use modern, secure hashing algorithms. While MD5 and SHA-1 were once popular, they are now considered deprecated due to known vulnerabilities. Opt for **SHA-256** or **SHA-3** for any new implementation to ensure robust security and data verification.


## 2. Digital Signatures

Digital signatures are a powerful cryptographic method that provides stronger assurance than many other data verification techniques. They use public-key cryptography to bind a signer's key (and, through certificates, the signer's identity) to a piece of digital information. This not only verifies that the data is unaltered but also confirms who signed it and makes it difficult for the signer to later deny having done so, as long as the private key has not been compromised.

The core principle involves a pair of keys: a private key, which is kept secret by the sender, and a public key, which is shared openly. The sender uses their private key to create a unique "signature" for the data. Anyone with the sender's public key can then use it to verify that the signature is authentic and that the data has not been changed since it was signed.

### How Digital Signatures Work

A digital signature is created by first generating a hash of the data (similar to the hash functions described earlier) and then applying the sender's private key to that hash. In RSA-based schemes this step is often described as "encrypting" the hash with the private key; other algorithms, such as ECDSA, use different math but follow the same sign-with-the-private-key, verify-with-the-public-key pattern. The resulting signature is attached to the data. The recipient uses the sender's public key to check the signature against a hash they independently calculate from the received data. If the check passes, the verification is successful.

This process provides three crucial guarantees. **Authentication** confirms the sender's identity, **integrity** ensures the data is unchanged, and **non-repudiation** prevents the sender from later denying their involvement. This robust framework makes digital signatures a cornerstone of secure digital communication and transactions.
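
The hash-then-sign flow can be illustrated with textbook RSA in plain Python. This is a deliberately insecure toy, included only to make the steps concrete: the key is built from two well-known Mersenne primes (so anyone can recompute the private key), and no padding scheme is used. Real systems should rely on a vetted library and a standard scheme such as RSA-PSS, ECDSA, or Ed25519. The names `sign`, `verify`, and `digest_int` are hypothetical, and `pow(E, -1, ...)` requires Python 3.8 or later.

```python
import hashlib

# TOY KEY, NOT SECURE: both primes are famous Mersenne primes, so anyone can
# rebuild the private exponent. Used here only to make the math concrete.
P = 2**521 - 1
Q = 2**607 - 1
N = P * Q                          # public modulus (about 1,128 bits)
E = 65537                          # public exponent
D = pow(E, -1, (P - 1) * (Q - 1))  # private exponent (Python 3.8+)


def digest_int(message: bytes) -> int:
    """Hash the message with SHA-256 and read the digest as an integer."""
    return int.from_bytes(hashlib.sha256(message).digest(), "big")


def sign(message: bytes) -> int:
    """Apply the private key to the message hash (textbook RSA, no padding)."""
    return pow(digest_int(message), D, N)


def verify(message: bytes, signature: int) -> bool:
    """Recover the signed hash with the public key and compare it to a fresh hash."""
    return pow(signature, E, N) == digest_int(message)


message = b"Wire 500 EUR to account 0012345678"
signature = sign(message)
print(verify(message, signature))                                # True
print(verify(b"Wire 900 EUR to account 0012345678", signature))  # False
```

Only the holder of `D` can produce a value that passes `verify`, while anyone with the public pair `(N, E)` can check it, which is the asymmetry that gives signatures their authentication and non-repudiation properties.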

### Use Cases and Implementation Tips

Digital signatures are essential in scenarios requiring legal or financial validity, as well as high-security software distribution.

* **Document Signing:** Adobe Acrobat uses digital signatures to create signed PDFs, which can carry legal weight under electronic-signature laws in many jurisdictions and offer a secure alternative to physical paperwork.

* **Software Distribution:** Microsoft Authenticode signs software executables, allowing users to verify that the software comes from the named publisher and has not been modified since it was signed. A valid signature does not, by itself, prove that the software is free of malware.

* **Secure Communications:** Email security standards like S/MIME and PGP use digital signatures to verify the sender's identity and ensure message integrity.

* **Website Authentication:** SSL/TLS certificates, which enable HTTPS, use digital signatures to authenticate a website's server to your browser.

> **Pro Tip:** For maximum security, use established algorithms such as **RSA with keys of 2048 bits or more** or **ECDSA over the P-256 curve**. Implement proper key management, including regular key rotation and expiration policies, and verify the full certificate chain and revocation status before trusting a signature.

## 3. Data Profiling

Data profiling is the process of examining, analyzing, and summarizing datasets to gain a deep understanding of their content, structure, and quality. Think of it as creating a detailed report card for your data. This technique helps identify anomalies, inconsistencies, and other quality issues before they can corrupt downstream processes like analytics or reporting.

The core principle involves running systematic analyses on data sources to collect statistics and metadata. This reveals the actual state of the data, rather than relying on assumptions about what it *should* contain. By understanding its characteristics, patterns, and relationships, you can validate whether the data conforms to established standards and business rules, making it a foundational data verification technique.

### How Data Profiling Works

Data profiling functions like a thorough diagnostic check-up for your datasets. It scans through columns and tables to uncover key attributes. This process typically involves three main types of analysis: **column profiling** to check the frequency and distribution of values within a column, **cross-column profiling** to analyze dependencies and relationships between columns, and **cross-table profiling** to examine relationships across different tables.

This methodical examination uncovers issues like null values, incorrect data types, or values that fall outside expected ranges. It validates the data's "health" by comparing its statistical summary against predefined business rules and metadata. For instance, profiling a "State" column might reveal entries like "California" and "CA," highlighting an inconsistency that needs to be standardized.
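
Column profiling can be sketched in a few lines of standard-library Python. The example below is a minimal, assumed implementation (the `profile_column` helper and the sample `customers` rows are hypothetical) that reports completeness, cardinality, frequent values, and value types for one column, which is enough to surface the "California" versus "CA" inconsistency described above.

```python
from collections import Counter


def profile_column(rows: list[dict], column: str) -> dict:
    """Summarize one column: completeness, cardinality, frequent values, types."""
    values = [row.get(column) for row in rows]
    present = [v for v in values if v not in (None, "")]
    return {
        "count": len(values),
        "nulls": len(values) - len(present),
        "distinct": len(set(present)),
        "top_values": Counter(present).most_common(3),
        "types": dict(Counter(type(v).__name__ for v in present)),
    }


customers = [
    {"id": 1, "state": "California"},
    {"id": 2, "state": "CA"},
    {"id": 3, "state": "CA"},
    {"id": 4, "state": None},
    {"id": 5, "state": "Texas"},
]

print(profile_column(customers, "state"))
```

Here the report shows one null and three distinct values for what are only two states, exactly the kind of finding that should trigger a standardization rule. Dedicated profiling tools layer cross-column and cross-table analysis on top of summaries like this.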

### Use Cases and Implementation Tips

Data profiling is crucial for data governance, data migration, and business intelligence initiatives where data quality is paramount. It is widely used across various industries to ensure data is fit for purpose.

* **Financial Services:** Banks profile customer data to ensure it meets strict regulatory and compliance standards like Know Your Customer (KYC).

* **Healthcare:** Hospitals and clinics analyze patient records for completeness and accuracy, which is critical for patient safety and billing.

* **Retail:** E-commerce companies profile product catalogs to ensure price, stock, and attribute consistency across all sales channels.

* **Data Migration:** Before moving data to a new system, profiling helps identify and fix issues that could cause the migration to fail.

> **Pro Tip:** Start by profiling your most critical data elements, the ones that directly impact key business decisions. Establish clear data quality metrics and acceptable thresholds, then automate the profiling process within your data pipelines to continuously monitor quality and catch issues early.

For more information on tools in this space, you can explore platforms such as [Informatica](https://www.informatica.com/products/data-quality.html) and Talend Data Quality.

## 4. Data Validation Rules

Data validation rules are predefined constraints and business logic that incoming data must meet to be considered correct and acceptable. This data verification technique acts as a gatekeeper, checking information against specific criteria such as format, range, type, or length to enforce data quality and consistency right at the source.

The core principle is to prevent "bad data" from ever entering a system. By setting up rules that data must pass before it is saved or processed, organizations can maintain high levels of accuracy and integrity. For example, a rule might ensure a phone number field only contains numbers and is a specific length, or that an order quantity is a positive integer.

### How Data Validation Rules Work

Think of data validation rules as the bouncer at an exclusive club. Each person (data entry) must meet a specific dress code (the rules) to get in. One rule might check for a valid ID (correct data type), another might enforce a specific dress style (correct format), and a third might ensure the person is on the guest list (valid value within a set). If any rule is broken, entry is denied, and the person is told why.

This process relies on **predefined logic** that is applied automatically. This logic checks whether the data is reasonable, complete, and formatted correctly. A key aspect is providing immediate and clear **user feedback**. When a rule fails, the system should generate an error message that explains what is wrong and how to fix it, guiding the user to correct the entry.

These rules are fundamental to building reliable and trustworthy information systems.
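
Here is one way such rules might look in application code: a minimal Python sketch, assuming a hypothetical order form with `phone`, `quantity`, and `state` fields. Each rule checks one criterion (format, type and range, or membership in an allowed set) and, when it fails, returns a message that tells the user how to fix the entry.

```python
import re

# Hypothetical rule parameters; adapt them to your own data.
ALLOWED_STATES = {"CA", "NY", "TX"}
PHONE_PATTERN = re.compile(r"[0-9]{10}")  # ASCII digits only


def validate_order(order: dict) -> list[str]:
    """Apply each rule and return human-readable errors (empty list = valid)."""
    errors = []

    # Format rule: the phone number must be exactly ten digits.
    if not PHONE_PATTERN.fullmatch(str(order.get("phone", ""))):
        errors.append("phone: enter exactly 10 digits, numbers only (e.g. 5551234567)")

    # Type and range rule: quantity must be a positive integer (bools excluded).
    quantity = order.get("quantity")
    if isinstance(quantity, bool) or not isinstance(quantity, int) or quantity <= 0:
        errors.append("quantity: enter a whole number greater than zero")

    # Set-membership rule: the state must come from the allowed list.
    if order.get("state") not in ALLOWED_STATES:
        errors.append(f"state: choose one of {', '.join(sorted(ALLOWED_STATES))}")

    return errors


print(validate_order({"phone": "555-1234", "quantity": 0, "state": "ZZ"}))
```

Returning every failed rule at once, rather than stopping at the first, lets the user fix all problems in a single pass.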

### Use Cases and Implementation Tips

Data validation rules are a cornerstone of almost every modern application, from simple contact forms to complex financial systems. They are one of the most essential data verification techniques for maintaining data quality.

* **E-commerce and CRM Platforms:** Platforms like Salesforce support configurable validation rules that can check customer addresses, ensure payment information is formatted correctly, and verify that product SKUs exist before an order is processed.

* **Financial Systems:** Banking applications and systems built on Oracle or SQL Server use constraints to check that transaction amounts are positive, account numbers match a valid format, and all required fields for a wire transfer are completed.

* **Healthcare Applications:** Electronic Health Record (EHR) systems validate patient demographics, medical codes (like ICD-10), and insurance information to prevent critical billing and treatment errors.

> **Pro Tip:** Implement validation at multiple layers. Use client-side validation (in the browser) for immediate user feedback and a better user experience, but always enforce the same rules on the server-side. Server-side validation is crucial as it acts as the final authority, preventing malicious or accidental bad data from bypassing client-side checks.
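
Database-level enforcement, the last line of defense behind server-side checks, can be as simple as declaring constraints in the schema. The sketch below uses Python's built-in `sqlite3` module with an in-memory database as a stand-in for a production system such as Oracle or SQL Server; the `transfers` table, its columns, and the 10-digit account format are hypothetical. Because the database itself rejects bad rows, the rules hold even if an application-level check is bypassed.

```python
import sqlite3

# In-memory SQLite stands in for a production database; the schema is hypothetical.
conn = sqlite3.connect(":memory:")
conn.execute(
    """
    CREATE TABLE transfers (
        id INTEGER PRIMARY KEY,
        -- Format rule: exactly 10 characters, digits only.
        account_number TEXT NOT NULL
            CHECK (length(account_number) = 10 AND account_number NOT GLOB '*[^0-9]*'),
        -- Range rule: transfer amounts must be positive.
        amount NUMERIC NOT NULL CHECK (amount > 0)
    )
    """
)


def insert_transfer(account_number, amount) -> bool:
    """Try to insert a row; the database rejects anything that breaks a constraint."""
    try:
        with conn:  # commits on success, rolls back on error
            conn.execute(
                "INSERT INTO transfers (account_number, amount) VALUES (?, ?)",
                (account_number, amount),
            )
        return True
    except sqlite3.IntegrityError as exc:
        print(f"Rejected {account_number!r}, {amount!r}: {exc}")
        return False


print(insert_transfer("0123456789", 250))  # True
print(insert_transfer("0123456789", -5))   # prints the rejection reason, then False
```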

## 5. Cross-Referencing

Cross-referencing is a powerful data verification technique that involves comparing information from multiple, independent sources to confirm its accuracy and consistency. By checking a piece of data against other reliable datasets, you can identify discrepancies, fill in gaps, and build a more complete and trustworthy picture. This method is foundational to ensuring data quality in complex systems.

The core principle is validation through corroboration. If two or more separate sources report the same information, confidence in that data's validity increases significantly. Conversely, if the sources conflict, it signals a potential error that requires further investigation. This systematic comparison is essential for detecting everything from simple typos to sophisticated fraud.

### How Cross-Referencing Works

Think of cross-referencing as detective work for your data. A detective wouldn't rely on a single witness's testimony; they would seek out multiple perspectives to build a solid case. Similarly, cross-referencing involves taking a data point from one system and checking it against records in another. The process relies on identifying **common identifiers** (like a name, ID number, or address) to link records across disparate datasets.

For this technique to be effective, you must have access to at least two independent and reliable data sources. The process then involves **matching** records based on predefined criteria. A successful match validates the data, while a mismatch or a failed match highlights a potential integrity issue that needs resolution. This makes cross-referencing a crucial technique for maintaining high data quality standards.
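
The matching step can be sketched as a comparison of two sources keyed by a shared identifier. In this minimal Python example, which assumes both sources are already loaded into dictionaries, the taxpayer IDs and income figures are invented for illustration; the function separates corroborated records, conflicting records, and failed matches.

```python
def cross_reference(source_a: dict, source_b: dict, fields: list[str]) -> dict:
    """Match records on a shared identifier and report agreements and conflicts."""
    report = {"matched": [], "conflicts": {}, "only_in_a": [], "only_in_b": []}
    for record_id, rec_a in source_a.items():
        rec_b = source_b.get(record_id)
        if rec_b is None:
            report["only_in_a"].append(record_id)  # failed match: nothing to compare
            continue
        diffs = {
            field: (rec_a.get(field), rec_b.get(field))
            for field in fields
            if rec_a.get(field) != rec_b.get(field)
        }
        if diffs:
            report["conflicts"][record_id] = diffs  # sources disagree: investigate
        else:
            report["matched"].append(record_id)  # sources agree: corroborated
    report["only_in_b"] = [rid for rid in source_b if rid not in source_a]
    return report


# Hypothetical data: self-reported vs. employer-reported income.
self_reported = {"T-001": {"income": 52000}, "T-002": {"income": 48000}, "T-003": {"income": 61000}}
employer_reported = {"T-001": {"income": 52000}, "T-002": {"income": 53500}, "T-004": {"income": 70000}}

print(cross_reference(self_reported, employer_reported, ["income"]))
```

In this run, T-001 is corroborated, T-002 is flagged as a conflict for review, and T-003 and T-004 surface as failed matches because each appears in only one source.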

### Use Cases and Implementation Tips

Cross-referencing is a cornerstone of data verification in industries where accuracy is non-negotiable, from finance to healthcare.

* **Financial Services:** Credit reporting agencies like Experian constantly cross-reference consumer data from lenders, banks, and public records to build comprehensive credit profiles.

* **Tax Authorities:** Government bodies validate income tax returns by cross-referencing self-reported income with data submitted by employers and financial institutions.

* **Healthcare:** Hospitals and clinics match patient records across different providers to ensure a complete and accurate medical history, preventing dangerous errors.

* **E-commerce:** Online retailers cross-reference customer shipping addresses with postal service databases to validate them before dispatch, reducing delivery failures.

> **Pro Tip:** Establish clear matching rules and confidence scores before you begin. For instance, a match on name, date of birth, and Social Security number might be treated as a high-confidence match, while a match on name and city alone would require manual review. This tiered approach streamlines the verification process.
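
A tiered rule set like this one might be encoded as follows. This is a hypothetical sketch: the field names, the light normalization (trimming whitespace and ignoring case), and the choice to route a name-plus-date-of-birth match to manual review are assumptions, not a standard.

```python
def match_tier(a: dict, b: dict) -> str:
    """Classify a candidate record pair using tiered matching rules."""

    def normalize(value) -> str:
        # Light cleanup only; production systems may add fuzzy matching.
        return "" if value is None else str(value).strip().lower()

    def agrees(field: str) -> bool:
        # A field counts only if both sides have a non-empty, equal value.
        left, right = normalize(a.get(field)), normalize(b.get(field))
        return left != "" and left == right

    if agrees("name") and agrees("date_of_birth") and agrees("ssn"):
        return "high_confidence"  # accept automatically
    if agrees("name") and (agrees("date_of_birth") or agrees("city")):
        return "manual_review"    # plausible, but a person should confirm
    return "no_match"


record = {"name": "Jane Doe", "date_of_birth": "1990-04-01", "ssn": "000-12-3456", "city": "Austin"}
print(match_tier(record, {"name": "jane doe", "city": "Austin"}))  # manual_review
```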

## 6. Checksums and Error Detection Codes

Checksums and error detection codes are simple yet powerful data verification techniques designed to catch accidental errors. They work by calculating a small, fixed-size value from a block of digital data. This value, the checksum, is stored or transmitted along with the data so that errors introduced during transmission or storage can be detected later.

The core principle is straightforward: the recipient recalculates the checksum from the received data and compares it to the checksum that was sent. If the two values match, the data is likely free of errors. If they do not match, it indicates that the data has been corrupted, prompting a request for retransmission or flagging the data as faulty. While similar to hash functions, checksums are generally simpler algorithms optimized for speed and error detection rather than cryptographic security.

### How Checksums and Error Detection Codes Work

Think of a checksum as a simple summary of a conversation. If you and a friend agree to meet, you might summarize the plan: "Okay, so coffee, Tuesday at 2 PM." Your friend confirms, "Coffee, Tuesday, 2 PM." This quick summary helps verify you both have the same information. A checksum does this for data, using a mathematical formula instead of words. A common example is a simple addition where you add up the numerical values of all the bytes in a file, and the final sum (or a part of it) becomes the checksum.
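
The simple additive checksum described above takes only a couple of lines. This sketch reuses the coffee-meeting message from the analogy and keeps only the lowest 8 bits of the sum (a common but arbitrary choice); the function name is hypothetical. It also shows the method's main weakness: reordered bytes produce the same sum.

```python
def additive_checksum(data: bytes) -> int:
    """Sum every byte and keep only the lowest 8 bits (a one-byte checksum)."""
    return sum(data) % 256


message = b"Coffee, Tuesday, 2 PM"
sent_checksum = additive_checksum(message)

received = b"Coffee, Tuesday, 3 PM"  # one character corrupted in transit
print(additive_checksum(received) == sent_checksum)  # False: error detected

# Weakness: reordering bytes leaves the sum unchanged, so this error slips through.
print(additive_checksum(b"2 PM") == additive_checksum(b"PM 2"))  # True
```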

Another popular method is the **Cyclic Redundancy Check (CRC)**, a more robust type of error-detecting code. It treats the data block as a binary polynomial and performs a division with a predetermined generator polynomial. The remainder of this division is the CRC value. This method is particularly effective at detecting common transmission errors like burst errors, where multiple consecutive bits are flipped.
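
To make the polynomial-division idea concrete, the following sketch computes the widely used CRC-32 (the variant found in gzip, ZIP, and PNG files) one bit at a time and checks the result against Python's built-in `zlib.crc32`. Production code would normally call the library function or use a table-driven implementation; the bitwise loop and the name `crc32_bitwise` are for illustration only.

```python
import zlib


def crc32_bitwise(data: bytes) -> int:
    """CRC-32 computed bit by bit.

    Each inner step is one stage of polynomial long division over GF(2):
    XOR plays the role of subtraction, and 0xEDB88320 is the standard
    CRC-32 generator polynomial written in bit-reversed form.
    """
    crc = 0xFFFFFFFF  # standard initial value
    for byte in data:
        crc ^= byte
        for _ in range(8):
            if crc & 1:
                crc = (crc >> 1) ^ 0xEDB88320
            else:
                crc >>= 1
    return crc ^ 0xFFFFFFFF  # standard final XOR


payload = b"123456789"
print(hex(crc32_bitwise(payload)))                    # 0xcbf43926 (the published check value)
print(crc32_bitwise(payload) == zlib.crc32(payload))  # True
```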

### Use Cases and Implementation Tips

Checksums and CRCs are fundamental to reliable digital communication and storage, found in countless everyday technologies.

* **Network Protocols:** Core internet protocols such as IPv4, TCP, and UDP carry a 16-bit checksum in their headers, so corrupted packets can be detected and discarded in transit (and, for TCP, retransmitted).

* **Data Storage:** Hard drives and SSDs store error-correcting codes alongside the data in each sector, and drive interfaces such as SATA protect transfers with CRCs, reducing the risk of silent data corruption.

* **Error Correcting Memory:** High-reliability systems use ECC (Error-Correcting Code) memory, which stores extra check bits with each word of data so the hardware can not only detect but also correct single-bit errors on the fly.

* **Barcodes:** Product codes like ISBN and UPC incorporate a "check digit," which is a single-digit checksum to verify the number was scanned or entered correctly.
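
Check digits are one of the easiest error-detection codes to see in action. The sketch below implements the standard UPC-A rule (triple the sum of the digits in odd positions, add the digits in even positions, and choose the check digit that brings the total to a multiple of 10). The function names are hypothetical, and 036000291452 is a commonly cited valid UPC-A example.

```python
def upc_check_digit(first_11: str) -> int:
    """Compute the UPC-A check digit from the first 11 digits of a code."""
    digits = [int(ch) for ch in first_11]
    odd_sum = sum(digits[0::2])   # positions 1, 3, 5, ..., 11 (1-based)
    even_sum = sum(digits[1::2])  # positions 2, 4, ..., 10
    return (10 - (odd_sum * 3 + even_sum) % 10) % 10


def is_valid_upc(code: str) -> bool:
    """A 12-digit UPC-A is valid when its last digit matches the computed check digit."""
    return len(code) == 12 and code.isdigit() and upc_check_digit(code[:11]) == int(code[11])


print(is_valid_upc("036000291452"))  # True
print(is_valid_upc("036000291453"))  # False: last digit mistyped
print(is_valid_upc("036000921452"))  # False: two adjacent digits swapped
```

The last two calls show the mistakes check digits are designed to catch: a single mistyped digit and, in most cases, a swap of two adjacent digits.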

> **Pro Tip:** Choose the right code for the job. A simple checksum is fast but may miss certain error patterns. For higher reliability in networking or storage, use a **CRC** algorithm like CRC-32. For mission-critical systems like servers, investing in **ECC memory** provides an essential layer of automated data verification and correction.

## 7. Statistical Analysis and Outlier Detection

Statistical analysis and outlier detection are powerful data verification techniques that leverage mathematical models to identify data points deviating significantly from the norm. This method moves beyond simple rule-based checks by analyzing the entire dataset to uncover anomalies, or outliers, that might signal errors, corruption, or even fraudulent activity.

The core principle involves establishing a baseline of what constitutes "normal" behavior for a dataset. Once this baseline is defined, any data point that falls outside this expected pattern is flagged for review. This approach is highly effective for large, complex datasets where manually checking every entry is impossible, allowing organizations to pinpoint potential issues with high precision.

### How Statistical Analysis and Outlier Detection Work

Imagine monitoring the daily temperature of a city. Over time, you establish a normal range, say 50-70°F for a particular season. If one day the reading suddenly jumps to 110°F, that's an outlier. Statistical models do this on a much larger and more complex scale. They use methods like **standard deviation**, **Z-scores**, or **interquartile ranges (IQR)** to mathematically define the boundaries of normal data distribution.
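
Both classic approaches can be sketched with Python's standard `statistics` module. In this minimal example the temperature readings are invented, the z-score threshold of 3 and the IQR multiplier of 1.5 (Tukey's fences) are conventional defaults rather than universal rules, and the helper names are hypothetical.

```python
import statistics


def zscore_outliers(values: list[float], threshold: float = 3.0) -> list[float]:
    """Flag values more than `threshold` population standard deviations from the mean."""
    mean = statistics.fmean(values)
    stdev = statistics.pstdev(values)
    if stdev == 0:
        return []  # all values identical: nothing stands out
    return [v for v in values if abs(v - mean) / stdev > threshold]


def iqr_outliers(values: list[float], k: float = 1.5) -> list[float]:
    """Flag values outside [Q1 - k*IQR, Q3 + k*IQR] (Tukey's fences)."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    spread = q3 - q1
    low, high = q1 - k * spread, q3 + k * spread
    return [v for v in values if v < low or v > high]


# Hypothetical daily temperatures (°F) with one suspicious reading.
temps = [55, 58, 60, 62, 61, 59, 57, 110]

print(iqr_outliers(temps))                    # [110]
print(zscore_outliers(temps))                 # []
print(zscore_outliers(temps, threshold=2.5))  # [110]
```

The empty z-score result illustrates a practical pitfall: in a small sample, one extreme value inflates the standard deviation enough to partly hide itself (here the 110°F reading scores only about 2.6). Quartile-based fences are less affected, which is why they are often preferred for a first pass.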

More advanced techniques employ machine learning algorithms to learn from the data's historical patterns and build dynamic models. These models can adapt to changing trends, making them excellent for identifying subtle, novel anomalies. The goal is to separate the signal (valid data) from the noise (errors or outliers), thus verifying the overall quality and integrity of the dataset.

### Use Cases and Implementation Tips

This technique is crucial in fields where identifying anomalies is critical for operational success and security.

* **Financial Fraud Detection:** Credit card companies analyze spending patterns. A transaction that is unusually large or occurs in a new location is flagged as a potential outlier, triggering a fraud alert.

* **Network Security:** Security systems monitor network traffic. A sudden, massive spike in data transfer from a single IP address can be identified as an outlier, possibly indicating a denial-of-service attack.

* **Manufacturing Quality Control:** A sensor on an assembly line might monitor the weight of a product. If a product suddenly weighs significantly more or less than the established average, it's flagged as an outlier and removed for inspection.

> **Pro Tip:** Balance sensitivity to avoid alert fatigue. A model that is too sensitive will generate too many false positives, causing users to ignore important alerts. Start with a conservative baseline and fine-tune your statistical models using a feedback loop where analysts confirm whether flagged outliers are genuine issues.

## 8. Blockchain-Based Verification

Blockchain technology offers a revolutionary approach to data verification by leveraging a distributed, immutable ledger. This technique creates a secure and transparent record of data transactions, where each new piece of information is cryptographically linked to the previous one, forming a "chain." Because this ledger is distributed across numerous computers in a network, it is incredibly resistant to tampering or unauthorized changes.

The core principle of blockchain-based verification is that once data is recorded, it cannot be altered without also altering every subsequent block. On a large public network, that would require an impractical amount of computing power or the cooperation of most of the network, which makes this one of the most robust data verification techniques for scenarios requiring a permanent and auditable history of information.

### How Blockchain-Based Verification Works

Imagine a shared digital notebook where every entry is sealed with a unique, unbreakable lock. Each new entry not only gets its own lock but is also chained to the previous entry's lock. Every participant in the network holds an identical copy of this notebook. If someone tries to change an old entry, they would have to break that entry's lock and every single lock that came after it, all while convincing the majority of participants that their tampered version is the real one.

This decentralized and cryptographically secured structure ensures that the data is both **tamper-evident** and highly available. The **consensus mechanism**, a set of rules by which the network agrees on the validity of transactions, further secures the ledger, making it a trusted source of truth without needing a central authority.
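
The chaining idea at the heart of this structure can be sketched in a few lines of Python. This is a deliberately simplified model, assuming a single in-memory list: there is no network, no distribution of copies, and no consensus mechanism, and the helper names and supply-chain events are hypothetical. It shows only why editing an earlier block is detectable.

```python
import hashlib
import json


def block_hash(block: dict) -> str:
    """Hash a block's contents deterministically (sorted keys give stable JSON)."""
    return hashlib.sha256(json.dumps(block, sort_keys=True).encode()).hexdigest()


def append_block(chain: list[dict], data: str) -> None:
    """Add a block that records the hash of the block before it."""
    prev_hash = block_hash(chain[-1]) if chain else "0" * 64  # genesis block
    chain.append({"index": len(chain), "data": data, "prev_hash": prev_hash})


def verify_chain(chain: list[dict]) -> bool:
    """Recompute each link; any edit to an earlier block breaks the next link."""
    return all(
        chain[i]["prev_hash"] == block_hash(chain[i - 1]) for i in range(1, len(chain))
    )


ledger: list[dict] = []
for event in ["Batch 42 produced", "Batch 42 shipped", "Batch 42 received"]:
    append_block(ledger, event)

print(verify_chain(ledger))              # True
ledger[0]["data"] = "Batch 99 produced"  # tamper with history
print(verify_chain(ledger))              # False
```

This link check alone cannot catch an edit to the most recent block, since no later block records its hash; in a real network, comparison against other participants' copies and the consensus mechanism address that case.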

### Use Cases and Implementation Tips

Blockchain is transforming industries where data integrity and traceability are paramount, from finance to supply chain management.

* **Supply Chain Tracking:** Companies use blockchain to track products like pharmaceuticals and food from source to consumer, ensuring authenticity and preventing counterfeits.

* **Academic Credentials:** Universities can issue digital diplomas on a blockchain, allowing employers and other institutions to instantly verify academic achievements.

* **Voting Systems:** Blockchain has been piloted as a platform for recording and tallying votes, with the aim of making results more transparent and auditable and reducing opportunities for tampering.

> **Pro Tip:** For enterprise applications, consider a private or consortium blockchain like those from the **Hyperledger Project**. These permissioned networks offer greater control over who can participate, providing a balance between the transparency of a public blockchain and the privacy needs of a business.

## Data Verification Techniques Comparison Matrix

| Technique | Implementation Complexity 🔄 | Resource Requirements ⚡ | Expected Outcomes 📊 | Ideal Use Cases 💡 | Key Advantages ⭐ |
|---|---|---|---|---|---|
| Hash Functions (Checksums) | Low - simple algorithms | Low - minimal compute & storage | Fast data integrity verification | Data integrity checks, file verification | Fast, efficient, minimal overhead, widely supported |
| Digital Signatures | High - asymmetric cryptography | Medium-High - key management required | Strong authentication, integrity, non-repudiation | Document signing, software authentication, secure email | Legally recognized in many jurisdictions, strong identity verification |
| Data Profiling | Medium - statistical analysis | Medium - compute & domain expertise | Insight into data quality and anomalies | Data quality assessment, governance | Comprehensive quality insights, anomaly detection |
| Data Validation Rules | Medium - rule configuration | Low-Medium - depends on rule complexity | Prevents invalid data, enforces business logic | Data entry validation, business process enforcement | Customizable, immediate feedback, reduces errors |
| Cross-Referencing | High - multi-source matching | Medium-High - multiple data sources | Detects discrepancies and duplicates | Data integration, migration, identity verification | High accuracy via multi-source validation |
| Checksums & Error Detection Codes | Low - straightforward math | Low - minimal computation | Detects transmission/storage errors | Networking protocols, storage systems | Very fast, hardware accelerated, error detection |
| Statistical Analysis & Outlier Detection | High - statistical & ML models | High - computationally intensive | Identifies subtle anomalies and fraud | Fraud detection, quality control, security | Adaptive, detects unknown patterns, quantitative |
| Blockchain-Based Verification | Very High - decentralized network | High - compute and energy | Immutable audit trail, tamper-evident verification | Supply chain, digital identity, voting systems | Extremely tamper resistant, transparent, decentralized |

## Building a Culture of Verifiable Truth

We've journeyed through a comprehensive toolkit of powerful data verification techniques, from the cryptographic certainty of hash functions and digital signatures to the contextual intelligence of data profiling and cross-referencing. Each method, whether it's the systematic application of data validation rules or the immutable ledger of blockchain, offers a distinct layer of defense against error, manipulation, and falsehood. The central lesson is clear: in an age of information overload, verification is not an optional final step but a foundational principle of credible work.

The power of these methods is not in isolation but in their strategic combination. A single technique can be circumvented, but a multi-layered approach creates a robust and resilient framework for data integrity. Imagine a system where data is first checked with a checksum, then validated against predefined rules, profiled for anomalies, and finally anchored with a digital signature. This "defense-in-depth" strategy transforms data management from a reactive cleanup process into a proactive system of assurance.

### Synthesizing Your Verification Strategy

Moving forward, the challenge is to translate this knowledge into practice. The choice of which **data verification techniques** to implement depends entirely on your specific context, balancing the need for security, the cost of implementation, and the complexity of the data itself.

* **For High-Stakes Financial Data:** A combination of **Digital Signatures**, strict **Data Validation Rules**, and **Blockchain-Based Verification** provides strong security, a tamper-evident audit trail, and non-repudiation.

* **For Large-Scale Academic Research:** **Data Profiling**, **Statistical Analysis**, and **Cross-Referencing** against established sources are essential for ensuring the quality and validity of research findings.

* **For Journalistic Fact-Checking:** **Cross-Referencing** is paramount, supplemented by **Hash Functions** to ensure digital evidence hasn't been tampered with and **Statistical Analysis** to spot suspicious patterns in datasets.

* **For Everyday Data Integrity:** Implementing **Checksums** and basic **Data Validation Rules** in your workflows can drastically reduce common errors and ensure consistency.

> **Key Takeaway:** The goal is not just to "check" data, but to build an ecosystem where data is trustworthy by design. This involves embedding verification practices directly into the workflows of researchers, journalists, policymakers, and citizens alike.

### The Broader Impact: From Data Points to Decisions

Mastering these **data verification techniques** is about more than just clean spreadsheets and accurate databases. It's about building a foundation of trust that underpins our most critical activities. When data is verifiable, academic research becomes more reliable, journalistic reporting becomes more credible, and policy decisions become more effective and just. It empowers us to hold institutions accountable, to challenge misinformation with evidence, and to collaborate with confidence.

This commitment to verifiable truth is a cultural shift. It requires us to move beyond passive consumption of information and become active participants in its validation. By fostering a culture that values and practices rigorous verification, we equip ourselves and our communities with the tools needed to navigate an increasingly complex information landscape. This is our most effective defense against the erosion of trust and our most powerful tool for building a future based on shared, verifiable reality.

---

Ready to see these principles in action? Explore **Factiii**, a platform built on the very foundation of verification, using crowd-sourced intelligence and transparent sourcing to create a more reliable information ecosystem for everyone. Discover how you can contribute to and benefit from a world of verifiable truth at [Factiii](https://factiii.com).