# Top 9 Best Practices in Research for 2025

by @outrank | Factiii


Research is the engine of progress, but its power comes from its credibility. Every finding, whether it informs public policy, guides medical breakthroughs, or shapes our understanding of the world, rests on a foundation of trust. Without rigorous, transparent, and ethical methods, even the most promising ideas can crumble under scrutiny, leading to wasted resources and a loss of public confidence. Strong research isn't just about discovering something new; it's about building a case so solid that others can stand on it to reach even higher. This requires a commitment to established principles that ensure every step, from the initial question to the final analysis, is sound.

This guide provides a comprehensive roundup of the essential **best practices in research**. We will move beyond abstract concepts to offer actionable insights and practical implementation details for each core principle. Instead of just telling you *what* to do, we will show you *how* to do it effectively.

You will learn to:

* Formulate precise research questions using the **PICO framework**.

* Conduct a **systematic literature review** to build on existing knowledge.

* Implement robust study designs like **Randomized Controlled Trials (RCTs)**.

* Navigate the **peer review process** with integrity.

* Master **data management** for accuracy and transparency.

From securing ethical approval and calculating proper sample sizes to ensuring your work is reproducible, this listicle is designed as a practical toolkit. It will equip you with the methods needed to produce research that is not only innovative but also reliable, verifiable, and truly valuable to your field and society. Let's explore the pillars of high-quality research.

## 1. PICO Framework for Research Questions

A strong research project is built on the foundation of a clear, focused, and answerable question. The PICO framework is a powerful tool used to construct such questions, ensuring your study has direction and purpose from the very beginning. While it originated in evidence-based medicine, its structured approach is one of the best practices in research for any discipline that compares an intervention against an alternative, helping to translate a broad area of interest into a testable question.

PICO is an acronym that stands for **Population**, **Intervention**, **Comparison**, and **Outcome**. Breaking down your question into these four components forces you to define exactly what you want to study. This clarity is essential not only for designing your methodology but also for conducting a targeted and efficient literature search.

### How to Apply the PICO Framework

Using PICO helps you move from a vague idea to a specific, manageable research question. Let’s break down each component:

* **P - Population/Patient/Problem:** Who are you studying? Be specific about the group. Instead of "students," a better P might be "underperforming 9th-grade algebra students."

* **I - Intervention:** What is the main action, treatment, or factor you are considering? This could be a new teaching method, a specific medication, or a public policy.

* **C - Comparison/Control:** What is the alternative to your intervention? This could be a placebo, a standard practice, or no intervention at all. This component provides a baseline for measuring effect.

* **O - Outcome:** What do you want to measure, improve, or affect? The outcome must be measurable. Examples include "a reduction in test anxiety" or "an increase in quarterly sales."

For instance, a general education question like "Does technology help in class?" can be refined using PICO: "In **elementary students with reading difficulties (P)**, does using **interactive reading software (I)** compared to **traditional read-aloud sessions (C)** improve **reading comprehension scores (O)**?" This structured question provides an immediate roadmap for the study's design. This meticulous approach to question formulation is a hallmark of high-quality, impactful research.
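The four components can also be kept as structured data, so that the same fields feed both the written question and a first-pass literature search. The following is a minimal sketch, not a standard tool: the `PicoQuestion` class, its method names, and the fixed verb "improve" are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PicoQuestion:
    """Hypothetical container for the four PICO components."""

    population: str
    intervention: str
    comparison: str
    outcome: str

    def as_question(self) -> str:
        # Assemble the components into one answerable question.
        return (
            f"In {self.population}, does {self.intervention} "
            f"compared to {self.comparison} improve {self.outcome}?"
        )

    def as_search_string(self) -> str:
        # Quote each component and join with AND as a starting point
        # for a database search; a real search also needs synonyms.
        parts = (self.population, self.intervention, self.comparison, self.outcome)
        return " AND ".join(f'"{part}"' for part in parts)


question = PicoQuestion(
    population="elementary students with reading difficulties",
    intervention="interactive reading software",
    comparison="traditional read-aloud sessions",
    outcome="reading comprehension scores",
)
print(question.as_question())
print(question.as_search_string())
```

Writing the components down separately makes it obvious when one of them is still vague: an empty or generic field is easier to spot than a fuzzy sentence.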

## 2. Systematic Literature Review Methodology

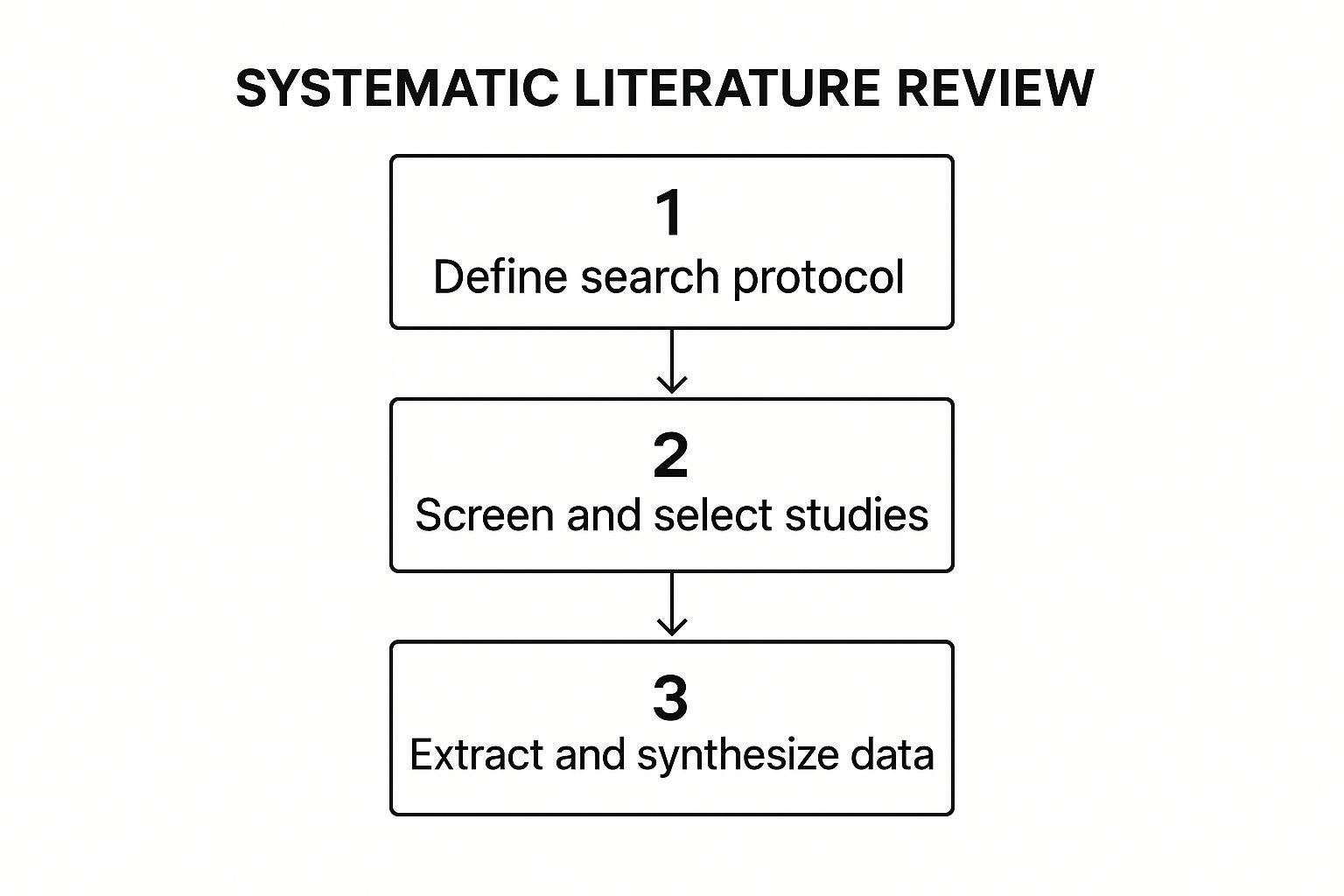

After formulating a precise research question, the next critical step is understanding the existing body of knowledge. A systematic literature review is a rigorous, transparent, and reproducible method for identifying, appraising, and synthesizing all relevant evidence on a specific topic. Unlike traditional reviews, it employs a strict, predefined protocol to minimize bias, making it a cornerstone among the best practices in research for producing high-quality evidence.

This methodical approach ensures that the review's findings are a comprehensive and impartial summary of the current research landscape. Pioneered by organizations like the Cochrane Collaboration, this methodology is now the gold standard in fields ranging from healthcare to social policy, providing a reliable foundation for evidence-based decision-making. The process is defined by its meticulous and exhaustive nature.

This infographic outlines the core workflow of conducting a systematic literature review, breaking it down into three fundamental stages.

The structured flow from defining the protocol to synthesizing data ensures that the process is logical, transparent, and replicable.

### How to Apply Systematic Review Methodology

Executing a systematic review requires careful planning and adherence to a strict protocol. Following established guidelines like PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) is essential for credibility.

* **Develop and Register a Protocol:** Before starting, clearly define your research question, inclusion/exclusion criteria, and search strategy. Registering your protocol in a public database like PROSPERO adds transparency and prevents duplicate efforts.

* **Conduct a Comprehensive Search:** Use multiple academic databases (e.g., PubMed, Scopus, Web of Science) and grey literature sources to identify all potentially relevant studies. Your search terms should be exhaustive to capture the full scope of research.

* **Screen and Select Studies:** Have at least two reviewers independently screen titles, abstracts, and full texts against the predefined eligibility criteria. This dual-review process minimizes selection bias. Disagreements are resolved through discussion or a third reviewer.

* **Extract Data and Synthesize Findings:** Once final studies are selected, systematically extract relevant data using a standardized form. Synthesize the findings qualitatively (thematic analysis) or quantitatively (meta-analysis) to answer the research question.

For example, a review guided by PRISMA might investigate the effectiveness of a specific mental health intervention. Researchers would search multiple databases, screen thousands of articles, and ultimately synthesize data from a small set of high-quality randomized controlled trials. This deliberate, step-by-step process is what gives systematic reviews their unmatched authority and impact.
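The dual-review screening step lends itself to a small script that lists the records the two reviewers disagree on and summarizes how well they agree. The sketch below uses Cohen's kappa as the agreement statistic, which is an assumption on our part (the steps above do not name a statistic), and the record IDs and decisions are invented for illustration.

```python
def cohen_kappa(decisions_a, decisions_b):
    """Cohen's kappa for two reviewers' decisions on the same records."""
    if len(decisions_a) != len(decisions_b) or not decisions_a:
        raise ValueError("Both reviewers must rate the same non-empty set of records.")
    n = len(decisions_a)
    observed = sum(a == b for a, b in zip(decisions_a, decisions_b)) / n
    # Chance agreement: product of the reviewers' marginal rates, summed over labels.
    labels = set(decisions_a) | set(decisions_b)
    expected = sum(
        (decisions_a.count(label) / n) * (decisions_b.count(label) / n)
        for label in labels
    )
    if expected == 1.0:
        return 1.0  # Both reviewers used one identical label throughout.
    return (observed - expected) / (1 - expected)


# Hypothetical title/abstract screening decisions keyed by record ID.
reviewer_a = {
    "rec01": "include", "rec02": "include", "rec03": "exclude", "rec04": "exclude",
    "rec05": "exclude", "rec06": "include", "rec07": "exclude", "rec08": "exclude",
    "rec09": "include", "rec10": "exclude",
}
reviewer_b = {
    "rec01": "include", "rec02": "exclude", "rec03": "exclude", "rec04": "exclude",
    "rec05": "exclude", "rec06": "include", "rec07": "exclude", "rec08": "include",
    "rec09": "include", "rec10": "exclude",
}

record_ids = sorted(reviewer_a)
conflicts = [rid for rid in record_ids if reviewer_a[rid] != reviewer_b[rid]]
kappa = cohen_kappa(
    [reviewer_a[rid] for rid in record_ids],
    [reviewer_b[rid] for rid in record_ids],
)
print(f"Records needing discussion or a third reviewer: {conflicts}")
print(f"Cohen's kappa: {kappa:.2f}")
```

The conflict list is what goes to discussion or to the third reviewer; the kappa value is a compact way to report screening consistency in the review's methods section.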

## 3. Randomized Controlled Trial (RCT) Design

The Randomized Controlled Trial (RCT) is widely regarded as the gold standard for establishing a cause-and-effect relationship between an intervention and an outcome. This rigorous methodology involves randomly assigning participants to different groups, typically an experimental group receiving the intervention and a control group receiving a placebo or standard practice. This randomization is the key strength of an RCT, as it minimizes selection bias and, on average, balances both known and unknown confounding variables across the groups.

By isolating the intervention as the only systematic difference between the groups, researchers can confidently attribute any observed differences in outcomes to the intervention itself. This powerful design, pioneered by figures like Ronald Fisher and Austin Bradford Hill, provides the strongest level of evidence for efficacy, making it a cornerstone of evidence-based practice in fields from medicine to public policy. Employing an RCT is one of the best practices in research when your goal is to establish causality.

### How to Implement an RCT

A successful RCT requires careful planning and execution to maintain its integrity. The core principle is to ensure the only variable that differs systematically between groups is the intervention.

* **Randomization:** This is the foundational step. Use a random method that cannot be predicted or influenced by staff (like a computer-generated sequence) to assign participants to either the treatment or control group. The upcoming assignments should be concealed from those enrolling participants (allocation concealment) so that enrollment decisions cannot be biased.

* **Control Group:** A well-defined control group is essential for comparison. This group might receive no treatment, a standard existing treatment, or a placebo, providing a baseline to measure the intervention's true effect.

* **Blinding:** Whenever possible, blind participants and researchers to group assignments. Single-blinding means participants don't know their group; double-blinding means neither participants nor researchers know, which prevents bias in behavior and assessment.

* **Outcome Measurement:** Define your outcomes clearly and measure them consistently across all groups. These outcomes should be directly related to the research question and measured at baseline and post-intervention.

For example, to test a new psychological therapy for anxiety, researchers would randomly assign individuals with anxiety to either receive the new therapy (intervention group) or continue with standard counseling (control group). By comparing anxiety levels after the trial period, they can determine if the new therapy is more effective. This methodical approach is critical for producing reliable and actionable findings.
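A computer-generated allocation sequence can be produced in a few lines. The sketch below uses permuted-block randomization with a fixed seed; the block design, the `block_randomize` function, and the participant IDs are assumptions made for illustration, and in a real trial the sequence would be generated and held by someone who is not enrolling participants.

```python
import random
from collections import Counter


def block_randomize(participant_ids, block_size=4, seed=2025):
    """Assign participants 1:1 to 'treatment' or 'control' in permuted blocks."""
    if block_size % 2 != 0:
        raise ValueError("block_size must be even for 1:1 allocation.")
    rng = random.Random(seed)  # Fixed seed so the sequence can be audited later.
    allocation = {}
    for start in range(0, len(participant_ids), block_size):
        # Each block holds equal numbers of both arms, in shuffled order.
        block = ["treatment", "control"] * (block_size // 2)
        rng.shuffle(block)
        for pid, arm in zip(participant_ids[start:start + block_size], block):
            allocation[pid] = arm
    return allocation


participants = [f"P{i:03d}" for i in range(1, 13)]  # Hypothetical IDs P001..P012.
allocation = block_randomize(participants)
print(Counter(allocation.values()))
```

Blocking keeps the two arms the same size after every completed block, which matters in small trials where simple coin-flip randomization can leave the groups noticeably unbalanced.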

## 4. Rigorous Peer Review Process

The peer review process is a cornerstone of academic and scientific integrity, acting as the primary quality control mechanism for scholarly work. It involves the systematic evaluation of a research manuscript by independent experts, or peers, within the same field before it is accepted for publication. This critical appraisal ensures that research is methodologically sound, original, significant, and adheres to the ethical standards of the discipline. A robust peer review is one of the most vital best practices in research, safeguarding the credibility of the entire body of scientific literature.

This process, whose roots are often traced to the Royal Society of London's *Philosophical Transactions* in the 17th century, is fundamental to distinguishing rigorous, evidence-based findings from unsubstantiated claims. It serves not only to validate research but also to improve it, as reviewers provide constructive feedback that helps authors refine their arguments, clarify their methods, and strengthen their conclusions. The ultimate goal is to ensure that published work is a trustworthy contribution to knowledge.

### How to Engage in a Rigorous Peer Review Process

Whether you are an author submitting a manuscript or a researcher acting as a reviewer, understanding the mechanics of the process is crucial for upholding high standards. The core principle is an objective and critical assessment by qualified, unbiased experts.

* **As an Author:** Prepare your manuscript meticulously, anticipating potential critiques. Select journals that are known for their rigorous review standards. When you receive feedback, address each point thoughtfully and systematically, providing clear justifications for any changes made or for disagreeing with a suggestion.

* **As a Reviewer:** Accept invitations to review only when the manuscript falls squarely within your area of expertise. Provide a balanced, constructive, and impartial assessment based on the work's scientific merit, not on personal opinion. Your feedback should be detailed enough to guide the authors in making meaningful improvements.

Different models of peer review exist, each with its own approach to ensuring quality. Traditional **single-blind** or **double-blind** review, where the identity of the reviewer and sometimes the author is concealed, aims to reduce bias. Newer models like **open peer review**, where identities are known and reports may be published, promote transparency and accountability. This commitment to critical evaluation is what maintains the integrity of the research landscape.

## 5. Data Management and Documentation

Beyond collecting data, researchers have a critical responsibility to manage and document it systematically throughout a project's lifecycle. Comprehensive data management ensures the integrity, security, and long-term value of your research assets. This practice is foundational to transparency and reproducibility, serving as the organizational backbone that allows others to understand, verify, and build upon your work.

Effective data management involves the entire process from creation and storage to sharing and preservation. It moves data from being a private, temporary asset to a permanent, verifiable scholarly product. Increasingly, funding bodies like the National Science Foundation and the NIH mandate robust data management plans, underscoring its role as one of the essential best practices in research.

### How to Implement Strong Data Management

Adopting a structured approach from day one prevents confusion, data loss, and credibility issues down the line. Here’s how to put it into practice:

* **Create a Data Management Plan (DMP):** Before collecting any data, outline how you will handle it. A DMP should describe the types of data you'll generate, your standards for metadata, your policies for access and sharing, and your plans for long-term preservation in a repository like Zenodo.

* **Use Consistent Naming Conventions:** Establish and follow a logical system for naming files and folders. A standardized format (e.g., `ProjectName_DataType_YYYY-MM-DD.ext`) makes it easy to locate and version-control your files, preventing accidental use of outdated data.

* **Document Everything:** Maintain a detailed record of your procedures. An electronic lab notebook or a simple "readme.txt" file should accompany your dataset, explaining variable names, units of measurement, data collection methods, and any processing steps.

* **Implement Regular Backups:** Protect your work from hardware failure or corruption. Use the "3-2-1" rule: have at least **three** copies of your data, on **two** different media types, with **one** copy stored off-site (e.g., on a secure cloud service).

For example, a climate science team might use a DMP to specify that all sensor readings will be stored in NetCDF format, named using a `SensorID_Location_Date` convention, and documented in a shared electronic notebook. This ensures every team member understands and can use the data correctly, which is vital for collaborative and long-term research integrity.
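A naming convention is easiest to keep when a script builds and checks the names. The following sketch assumes the `ProjectName_DataType_YYYY-MM-DD.ext` pattern described above with letters and digits only in each part; the function names and the example project are hypothetical.

```python
import re
from datetime import date

# Pattern for ProjectName_DataType_YYYY-MM-DD.ext (letters and digits in each part).
NAME_PATTERN = re.compile(
    r"^(?P<project>[A-Za-z0-9]+)_(?P<datatype>[A-Za-z0-9]+)_"
    r"(?P<date>\d{4}-\d{2}-\d{2})\.(?P<ext>[A-Za-z0-9]+)$"
)


def is_valid_filename(name: str) -> bool:
    """Check the pattern and that the date part is a real calendar date."""
    match = NAME_PATTERN.match(name)
    if match is None:
        return False
    try:
        date.fromisoformat(match["date"])
    except ValueError:
        return False
    return True


def build_filename(project: str, data_type: str, day: date, ext: str) -> str:
    """Build a file name and refuse parts that would break the convention."""
    name = f"{project}_{data_type}_{day.isoformat()}.{ext}"
    if not is_valid_filename(name):
        raise ValueError(f"Name does not follow the convention: {name!r}")
    return name


print(build_filename("ReefSurvey", "SensorReadings", date(2025, 3, 14), "csv"))
print(is_valid_filename("final_v2 (copy).csv"))
```

Running a check like `is_valid_filename` over a shared folder before archiving is a cheap way to catch stray files before they end up in a repository.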

## 6. Ethical Review and Approval

Research, especially involving human subjects, is not just about finding answers; it's about doing so responsibly and ethically. Ethical review is a formal process where a study is scrutinized by an independent committee to ensure it upholds the highest ethical standards. This process, managed by bodies like an Institutional Review Board (IRB) or a Research Ethics Committee (REC), is a cornerstone of trustworthy science, designed to protect the rights, safety, and well-being of participants.

This systematic oversight is non-negotiable for most human-centric studies. Rooted in foundational ethical documents like the Nuremberg Code and the Belmont Report, the requirement for ethical approval ensures that the pursuit of knowledge never comes at the cost of human dignity. It's a critical checkpoint that validates a study's integrity before a single piece of data is collected, making it one of the most fundamental best practices in research.

### How to Navigate Ethical Review and Approval

Successfully navigating the ethics approval process requires foresight, transparency, and meticulous planning. It involves demonstrating to a committee that you have thoroughly considered and mitigated any potential risks to participants.

* **Prepare and Plan Early:** Don't treat ethics approval as a final step. Begin drafting your application as you finalize your research design. Committees often have specific meeting schedules and long review queues, so submitting early prevents significant delays to your project timeline.

* **Inform and Consent:** A core part of your application is the informed consent process. Clearly detail how you will explain the study to participants, including its purpose, procedures, potential risks, and benefits. Your consent forms must be clear, written in accessible language, and explicitly state that participation is voluntary.

* **Consider Vulnerable Populations:** If your research involves children, prisoners, pregnant women, or individuals with cognitive impairments, expect a higher level of scrutiny. You must provide a strong justification for their inclusion and detail the additional safeguards you have implemented to protect them from coercion or undue risk.

* **Ongoing Monitoring:** Ethical oversight doesn't end once you get approval. You must plan for how you will handle adverse events, manage data confidentially, and report any changes to your protocol. Be prepared to submit progress reports or seek amendments if your methodology evolves.

For example, a study on the psychological effects of social media use among teenagers would require a detailed IRB application. The application would need to outline how researchers will obtain parental consent and teen assent, protect the anonymity of participants' online data, and provide resources for mental health support if any participants report distress. This diligence ensures the research is not only valid but also virtuous.

## 7. Reproducibility and Transparency

For a study's conclusions to be considered credible, its findings must be verifiable. Reproducibility and transparency are the twin pillars supporting this verification process, forming one of the most critical best practices in research. Reproducibility is the ability for an independent researcher to achieve the same results using the original author's data and methods, while transparency involves openly sharing every component of the research lifecycle. Together, they build trust, allow for scrutiny, and accelerate scientific discovery.

Championed by figures like Brian Nosek of the Center for Open Science, this movement addresses the "replication crisis" seen in fields like psychology. By making the entire research process transparent, from hypothesis to analysis, researchers invite collaboration and critique. This openness not only validates the original findings but also allows others to build upon the work, reanalyze data with new techniques, or use the data to answer new questions, ensuring the research has a lasting impact.

### How to Implement Reproducibility and Transparency

Adopting these principles requires a deliberate commitment to openness throughout your research project. Here are actionable steps to make your work more reproducible and transparent:

* **Pre-register Your Study:** Before collecting data, publicly register your research plan, including your hypothesis, methods, and analysis strategy. Platforms like AsPredicted or the Open Science Framework (OSF) host these preregistrations. This discourages hypothesizing after results are known (HARKing) and promotes accountability.

* **Share Data and Code:** Whenever ethically and legally possible, make your raw data, analysis scripts, and code publicly available. Use repositories like OSF, GitHub, or Zenodo. This allows other researchers to directly replicate your analysis and verify your findings.

* **Use Version Control:** Employ version control systems like Git to track changes to your code and analysis files. This creates a detailed history of your work, making it easy to understand how your analysis evolved and to revert to previous versions if needed.

* **Document Everything:** Create a "reproducible research compendium" that bundles your data, code, and explanatory documents. Thoroughly comment your code and create a "README" file that explains the project structure and how to run the analysis from start to finish.

A prime example is the Reproducibility Project: Psychology, which attempted to replicate 100 studies, highlighting the importance of this practice. By adopting a transparent workflow, you contribute to a more robust and trustworthy scientific ecosystem, a core tenet of high-quality research.
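Part of a reproducible compendium can be generated automatically. The sketch below records the software environment, the random seed, and a checksum of the data next to the result, so a later reader can tell whether they are rerunning the same analysis on the same inputs. The manifest fields, the toy bootstrap step, and the in-memory dataset are illustrative assumptions rather than a prescribed format.

```python
import hashlib
import json
import platform
import random


def build_manifest(dataset: bytes, seed: int) -> dict:
    """Record what someone else needs in order to rerun the analysis."""
    return {
        "python_version": platform.python_version(),
        "platform": platform.platform(),
        "random_seed": seed,
        # The hash lets others confirm they hold exactly the same data.
        "data_sha256": hashlib.sha256(dataset).hexdigest(),
    }


def bootstrap_mean(values, seed, n_resamples=1000):
    """Toy analysis step whose result depends on random resampling."""
    rng = random.Random(seed)
    means = []
    for _ in range(n_resamples):
        sample = [rng.choice(values) for _ in values]
        means.append(sum(sample) / len(sample))
    return sum(means) / len(means)


# Stand-in for a raw data file; a real project would read the bytes from disk.
raw_csv = b"participant,score\n1,12\n2,15\n3,9\n4,14\n"
scores = [12, 15, 9, 14]
SEED = 20250101

manifest = build_manifest(raw_csv, SEED)
manifest["bootstrap_mean"] = bootstrap_mean(scores, SEED)
print(json.dumps(manifest, indent=2))
```

Committing a manifest like this alongside the code in Git ties each reported number to a specific data file, seed, and environment.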

## 8. Appropriate Statistical Analysis

Choosing the right analytical tools is as critical as collecting high-quality data. Appropriate statistical analysis involves selecting and applying methods that correctly match your research question, study design, and data type. This practice is a cornerstone of research integrity, ensuring that your conclusions are mathematically sound, valid, and defensible against scrutiny.

Adopting the right statistical approach prevents common but serious errors like choosing the wrong test, misinterpreting p-values, or ignoring the assumptions that underpin a statistical model. Pioneers like Ronald Fisher and John Tukey laid the groundwork for modern statistics, emphasizing that the goal is not just to produce a number but to gain genuine insight from data. This disciplined approach is one of the most vital best practices in research for generating reliable and meaningful results.

### How to Apply Appropriate Statistical Analysis

Ensuring your analysis is sound requires careful planning from the outset, not just after data collection. It is a proactive process that safeguards the validity of your findings. Let's break down the key steps:

* **Align Methods with Your Question and Data:** The type of analysis depends entirely on what you want to know and the nature of your data. For example, use a t-test to compare the means of two groups, an ANOVA to compare means across more than two groups, and a chi-squared test to examine associations between categorical variables.

* **Pre-Register Your Analysis Plan:** To avoid p-hacking or data dredging, define your statistical plan before you analyze the data. Specify your primary hypotheses, the tests you will use, and how you will handle outliers or missing data. This commitment to transparency enhances credibility.

* **Verify Statistical Assumptions:** Most statistical tests rely on certain assumptions about the data (e.g., normal distribution, homogeneity of variances). You must check if your data meets these assumptions before applying a test. If not, you may need to use a non-parametric alternative or transform your data.

* **Report More Than Just P-Values:** A p-value only tells you about statistical significance; it doesn't indicate the size or practical importance of an effect. Always report **effect sizes** (like Cohen's d or odds ratios) and **confidence intervals** to provide a complete picture of your findings.

For instance, a study comparing two teaching methods shouldn't just state that one was "significantly better" (p < .05). A more robust report would state: "Method A resulted in a statistically significant increase in test scores compared to Method B (p = .03), with a medium effect size (d = 0.5), indicating a practically meaningful improvement," and would give the 95% confidence interval for that effect so readers can judge its precision. This comprehensive reporting is a hallmark of rigorous research.
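An effect size and its confidence interval can be computed with the standard library alone. The sketch below calculates Cohen's d with a pooled standard deviation and a large-sample normal approximation to its confidence interval; the approximation, the function name, and the test scores are assumptions for illustration, and a statistics package would normally be used for a real analysis.

```python
import math
from statistics import NormalDist, mean, stdev


def cohens_d_with_ci(group_a, group_b, confidence=0.95):
    """Cohen's d (pooled SD) with an approximate confidence interval."""
    n_a, n_b = len(group_a), len(group_b)
    # Pooled variance weights each group's variance by its degrees of freedom.
    pooled_var = (
        (n_a - 1) * stdev(group_a) ** 2 + (n_b - 1) * stdev(group_b) ** 2
    ) / (n_a + n_b - 2)
    d = (mean(group_a) - mean(group_b)) / math.sqrt(pooled_var)
    # Large-sample approximation to the standard error of d.
    se = math.sqrt((n_a + n_b) / (n_a * n_b) + d ** 2 / (2 * (n_a + n_b)))
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    return d, (d - z * se, d + z * se)


# Hypothetical test scores for two teaching methods.
method_a = [78, 85, 82, 90, 74, 88, 81, 79]
method_b = [72, 80, 75, 83, 70, 78, 77, 73]

d, (lower, upper) = cohens_d_with_ci(method_a, method_b)
print(f"Cohen's d = {d:.2f}, 95% CI [{lower:.2f}, {upper:.2f}]")
```

With samples this small the interval is wide, which is exactly the information a bare p-value hides.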

## 9. Proper Sample Size Calculation

A study's findings are only as reliable as its design, and a critical component of that design is determining the right number of participants. Proper sample size calculation is the process of figuring out the minimum number of subjects needed to detect an effect of a given size with a chosen probability, if that effect truly exists. This practice is one of the most fundamental best practices in research, ensuring a study has enough statistical power to be meaningful without wasting valuable time and resources on an unnecessarily large group.

Pioneered by statisticians like Jacob Cohen, this method prevents two major pitfalls: underpowered studies that fail to find real effects and overpowered studies that detect trivial differences as statistically significant. An appropriately sized sample provides the statistical muscle needed to draw valid and reliable conclusions, making your research both ethical and efficient.

### How to Apply Sample Size Calculation

Calculating the right sample size involves a balance of statistical inputs. It moves your study plan from a general guess to a calculated, defensible number. Let’s break down the core components:

* **Effect Size:** This is the magnitude of the difference or relationship you expect to find. You can estimate this from prior research, a pilot study, or by defining the smallest effect that would be practically meaningful.

* **Significance Level (alpha):** This is the probability of a false positive, or finding an effect when one doesn't exist. It is typically set at 5% (α = 0.05).

* **Statistical Power (1-beta):** This is the probability of correctly finding a real effect, avoiding a false negative. The standard is usually 80% power.

* **Variability:** This refers to the standard deviation of the outcome measure in your population. Higher variability requires a larger sample size.

For example, a clinical trial testing a new drug for hypertension would calculate its sample size based on the expected reduction in blood pressure (effect size), a 5% risk of falsely claiming the drug works (alpha), and an 80% chance of detecting the reduction if it's real (power). Using power analysis software or formulas, researchers can determine that they need, for instance, 150 participants per group to confidently test their hypothesis. This rigorous approach ensures the study's results are trustworthy and impactful.
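The four inputs combine in a short formula. The sketch below uses the common normal-approximation formula for comparing two group means with a two-sided test, n per group = 2 × ((z for alpha + z for power) × SD / difference)². The 5 mmHg expected reduction and 10 mmHg standard deviation are made-up planning values, and the function name is hypothetical.

```python
import math
from statistics import NormalDist


def sample_size_per_group(effect, sd, alpha=0.05, power=0.80):
    """Approximate n per group for a two-sided, two-sample comparison of means."""
    if effect == 0:
        raise ValueError("The expected effect must be non-zero.")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # Critical value for the test.
    z_power = NormalDist().inv_cdf(power)          # Quantile for the desired power.
    n = 2 * ((z_alpha + z_power) * sd / effect) ** 2
    return math.ceil(n)  # Round up: you cannot recruit a fraction of a person.


# Hypothetical planning values: 5 mmHg expected reduction, SD of 10 mmHg.
print(sample_size_per_group(effect=5, sd=10))
# Halving the expected effect roughly quadruples the required sample.
print(sample_size_per_group(effect=2.5, sd=10))
```

Because this uses the normal distribution rather than the t distribution, dedicated power-analysis software will typically return a slightly larger number, and real trials also inflate the figure to allow for dropout.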

## 9 Best Practices Comparison Matrix

| Item | Implementation Complexity 🔄 | Resource Requirements ⚡ | Expected Outcomes 📊 | Ideal Use Cases 💡 | Key Advantages ⭐ |
|---|---|---|---|---|---|
| PICO Framework for Research Questions | Low to Moderate | Low | Clear, focused research questions | Formulating focused quantitative research questions | Structured question formulation; improves clarity and reproducibility |
| Systematic Literature Review Methodology | High | High | Comprehensive, unbiased evidence synthesis | Evidence synthesis for decision-making | Minimizes bias; comprehensive evidence base |
| Randomized Controlled Trial (RCT) Design | High | Very High | Strong causal evidence | Testing intervention effectiveness | Gold standard for causation; controls confounders |
| Rigorous Peer Review Process | Moderate | Moderate | Improved research quality and validity | Pre-publication quality control | Validates findings; maintains academic standards |
| Data Management and Documentation | Moderate | Moderate to High | Data integrity and reproducibility | Managing and preserving research data | Ensures data security; facilitates reproducibility |
| Ethical Review and Approval | Moderate | Moderate | Protection of participant rights | Human subjects research requiring ethical oversight | Protects participants; ensures research ethics |
| Reproducibility and Transparency | Moderate to High | Moderate | Verified, trustworthy research | Open science, replication studies | Builds credibility; promotes open data and methods |
| Appropriate Statistical Analysis | Moderate | Moderate | Valid, reliable statistical conclusions | Data analysis for valid inference | Prevents errors; enables proper statistical inference |
| Proper Sample Size Calculation | Moderate | Low to Moderate | Adequate power and resource optimization | Study planning for adequate power | Optimizes resources; improves validity |

## Final Thoughts

Navigating the landscape of modern inquiry demands more than just intellectual curiosity; it requires a disciplined commitment to a structured, ethical, and transparent process. Throughout this guide, we have explored a comprehensive suite of **best practices in research**, moving from the foundational art of crafting a precise research question with the PICO framework to the critical final steps of ensuring reproducibility and applying appropriate statistical analysis. Each practice we've detailed serves as a vital pillar supporting the integrity of your work and, by extension, the collective body of human knowledge.

The journey from a nascent idea to a credible conclusion is intricate. It’s easy to get lost in the complexities of data collection, analysis, or the pressures of publication. This is precisely why internalizing these frameworks is not just an academic exercise but a practical necessity. They are the guardrails that keep your investigation on track, ensuring that your findings are not only sound but also respected and built upon by others.

### Key Takeaways: From Frameworks to Foundational Habits

Let’s distill the core message. The best practices in research are not isolated checkboxes to be ticked off a list. Instead, they represent an interconnected ecosystem of principles that reinforce one another.

* **Clarity from the Start:** A well-defined question using the **PICO framework** prevents wasted effort, focusing your investigation from day one.

* **Building on a Solid Foundation:** A **systematic literature review** ensures you are not reinventing the wheel but are instead contributing meaningfully to an existing conversation.

* **Minimizing Bias and Chance:** Methodologies like **Randomized Controlled Trials (RCTs)** are your primary tools for minimizing bias, while rigorous **sample size calculations** give your study enough statistical power to distinguish real effects from random noise.

* **Maintaining Integrity and Trust:** Adherence to **ethical review**, robust **data management**, and a commitment to **transparency** are non-negotiable. They are the bedrock of public and peer trust in your findings.

Ultimately, adopting these practices transforms research from a private pursuit into a public service. It’s the difference between an interesting observation and a verifiable fact, between a personal project and a contribution that can influence policy, inspire new technology, or improve lives.

### Your Next Steps: Putting Best Practices into Action

So, where do you go from here? The most impactful step you can take is to move from passive understanding to active implementation. Don't wait for your next major project to begin.

1. **Conduct a Mini-Audit:** Review a recent or current project. Where could you have applied one of these practices more rigorously? Perhaps your data documentation could be more detailed, or your initial research question could have been refined using PICO.

2. **Focus on One New Habit:** Choose one area to master. If you’re new to systematic reviews, make that the focus of your next literature search. If data management has been an afterthought, create a standardized documentation template for your team.

3. **Become a Champion for Rigor:** Advocate for these standards within your team, lab, or organization. Share this article. Initiate conversations about improving your collective processes, from peer review protocols to data sharing policies.

Mastering the **best practices in research** is an ongoing journey, not a final destination. It requires diligence, a willingness to be critical of one's own work, and a persistent desire for improvement. By embracing these principles, you are not just becoming a better researcher; you are becoming a more trustworthy and impactful steward of knowledge in an age that needs it more than ever. Your commitment to rigor is what elevates your work and ensures it stands the test of time.
